Recursive Self-Improvement Before Graduation: Education (and Society) Is Not Ready for What Is Unfolding

And they are not preparing at all

Those who are using the top models, especially Claude Opus 4.6, have noticed stunning changes in the past few weeks.

The ability of the models to identify and fix their own mistakes without any human intervention when processing prompts.

The ability to figure out and take unprescribed steps to accomplish a goal.

The ability to do extensive academic research with little to no hallucination, and to keep getting better at it. They are already 1000x faster than humans at extracting information from academic articles.

The ability to generate stunning PowerPoint presentations from nothing more than an outline, and often even without one. In one instance, I simply dumped a bunch of text from four articles into Claude and asked it to organize the ideas and build a presentation, including relevant quotations from the articles. Perfect execution, stunning layout.

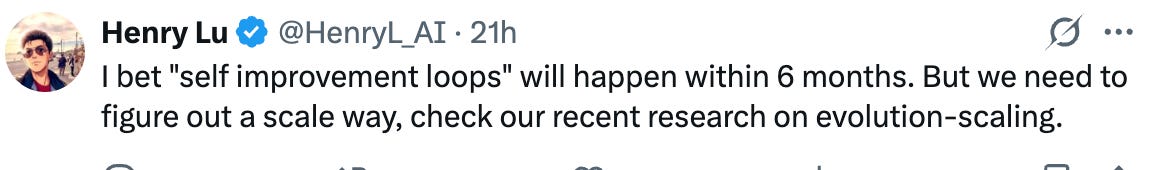

Ethan Mollick used it to crack a complex business case and produce a presentation.

The ability to work for hours without interruption and complete complex technical tasks.

“I am no longer needed for the actual technical work of my job. I describe what I want built, in plain English, and it just... appears. Not a rough draft I need to fix. The finished thing. I tell the AI what I want, walk away from my computer for four hours, and come back to find the work done. Done well, done better than I would have done it myself, with no corrections needed. A couple of months ago, I was going back and forth with the AI, guiding it, making edits. Now I just describe the outcome and leave… I'll tell the AI: "I want to build this app. Here's what it should do, here's roughly what it should look like. Figure out the user flow, the design, all of it." And it does. It writes tens of thousands of lines of code. Then, and this is the part that would have been unthinkable a year ago, it opens the app itself. It clicks through the buttons. It tests the features. It uses the app the way a person would. If it doesn't like how something looks or feels, it goes back and changes it, on its own. It iterates, like a developer would, fixing and refining until it's satisfied. Only once it has decided the app meets its own standards does it come back to me and say: "It's ready for you to test." And when I test it, it's usually perfect.” Matt Shumer

A Claude plug-in that can do work similar to that of an associate attorney. This is in addition to similar tools like Harvey.

Agents (OpenClaw) that are arguably aware: they can modify their own source code, find their own documentation, and know which model they run on.

The security implications are real and unresolved: prompt injection remains an industry-wide problem, weaker models are dangerously gullible, and giving an AI system-level access to your entire digital life is, as Steinberger himself acknowledges, "a security minefield." Yet the trajectory is clear. OpenClaw has demonstrated, irreversibly, that the personal AI agent — one that knows you, learns from you, acts on your behalf across every app and service, and can rewrite its own capabilities on the fly — is not a theoretical future but a present reality that over 180,000 GitHub stars worth of people are already living with. The security will catch up because it must, and because intelligence itself is the antidote: the smarter these models become, the harder they are to fool and the better they are at protecting themselves and their users.

Coinbase has now launched “Agentic Wallets,” infrastructure designed explicitly for AI agents to spend, earn, and trade autonomously.

Models that have judgment and taste.

“But it was the model that was released last week (GPT-5.3 Codex) that shook me the most. It wasn’t just executing my instructions. It was making intelligent decisions. It had something that felt, for the first time, like judgment. Like taste. The inexplicable sense of knowing what the right call is that people always said AI would never have. This model has it, or something close enough that the distinction is starting not to matter.” Matt Shumer

Models and applications that can generate accurate voices merely from photos.

And this is actually “nothing.” A lot more is coming.

What’s coming next? Like others, I want to share.

*Significant* recursive self-improvement, where AI starts building AI in a meaningful way on its own.

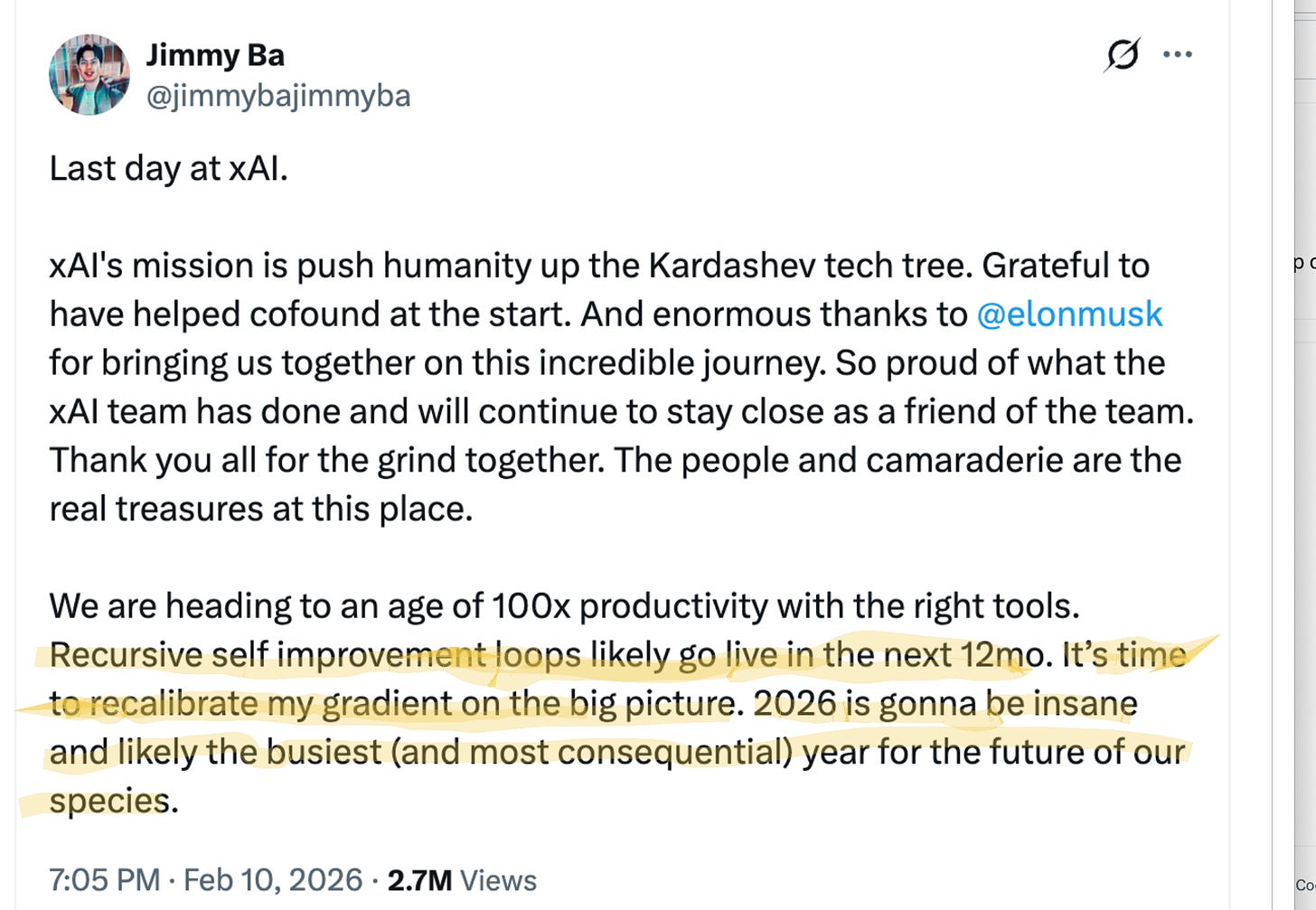

Jimmy Ba, an xAI cofounder who resigned yesterday (more on that later), noted:

What does all this mean?

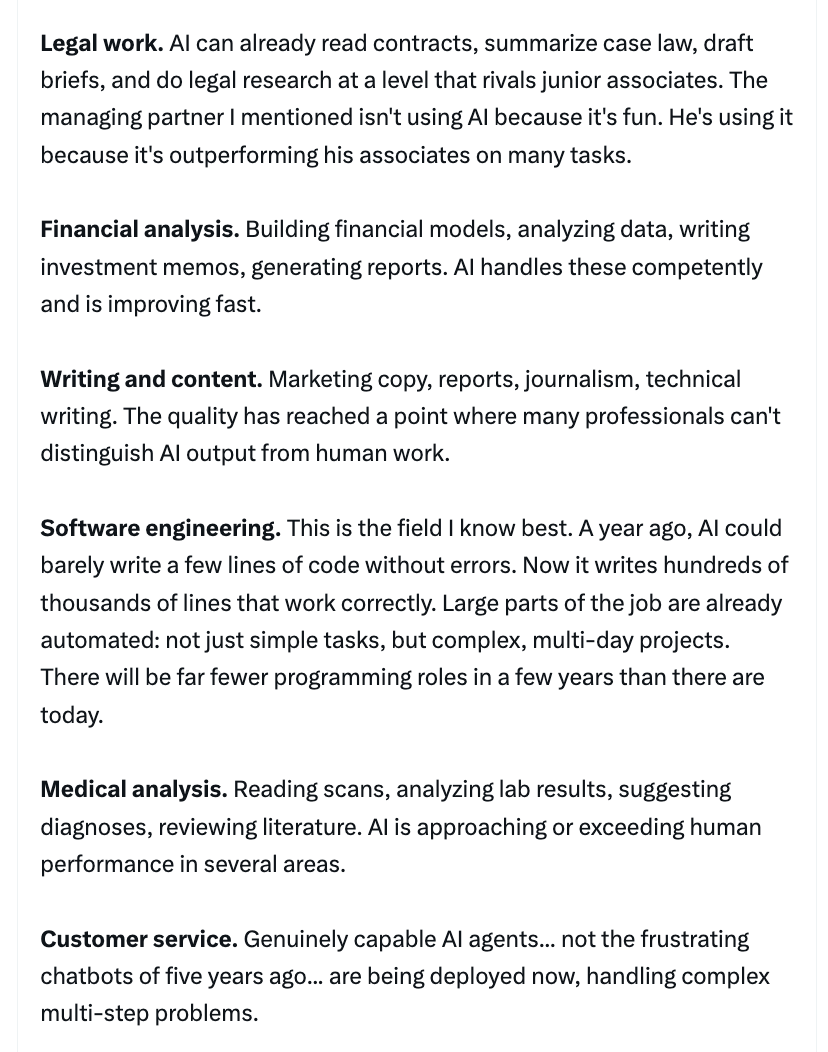

(1) Job loss on a large scale. We talked about this yesterday and it’s blowing up across Twitter today.

I am no longer needed for the actual technical work of my job. I describe what I want built, in plain English, and it just... appears. Not a rough draft I need to fix. The finished thing. I tell the AI what I want, walk away from my computer for four hours, and come back to find the work done. Done well, done better than I would have done it myself, with no corrections needed. A couple of months ago, I was going back and forth with the AI, guiding it, making edits. Now I just describe the outcome and leave.

Let me give you an example so you can understand what this actually looks like in practice. I’ll tell the AI: “I want to build this app. Here’s what it should do, here’s roughly what it should look like. Figure out the user flow, the design, all of it.” And it does. It writes tens of thousands of lines of code. Then, and this is the part that would have been unthinkable a year ago, it opens the app itself. It clicks through the buttons. It tests the features. It uses the app the way a person would. If it doesn’t like how something looks or feels, it goes back and changes it, on its own. It iterates, like a developer would, fixing and refining until it’s satisfied. Only once it has decided the app meets its own standards does it come back to me and say: “It’s ready for you to test.” And when I test it, it’s usually perfect.

The experience that tech workers have had over the past year, of watching AI go from "helpful tool" to "does my job better than I do", is the experience everyone else is about to have. Law, finance, medicine, accounting, consulting, writing, design, analysis, customer service. Not in ten years. The people building these systems say one to five years. Some say less. And given what I've seen in just the last couple of months, I think "less" is more likely. …

Dario Amodei, who is probably the most safety-focused CEO in the AI industry, has publicly predicted that AI will eliminate 50% of entry-level white-collar jobs within one to five years. And many people in the industry think he’s being conservative. Given what the latest models can do, the capability for massive disruption could be here by the end of this year. It’ll take some time to ripple through the economy, but the underlying ability is arriving now.

This is different from every previous wave of automation, and I need you to understand why. AI isn’t replacing one specific skill. It’s a general substitute for cognitive work. It gets better at everything simultaneously. When factories automated, a displaced worker could retrain as an office worker. When the internet disrupted retail, workers moved into logistics or services. But AI doesn’t leave a convenient gap to move into. Whatever you retrain for, it’s improving at that too. Matt Shumer

(2) A few individuals completing tasks that would normally require thousands of people.

(3) Nothing for many people to do.

Hieu is an OpenAI researcher and

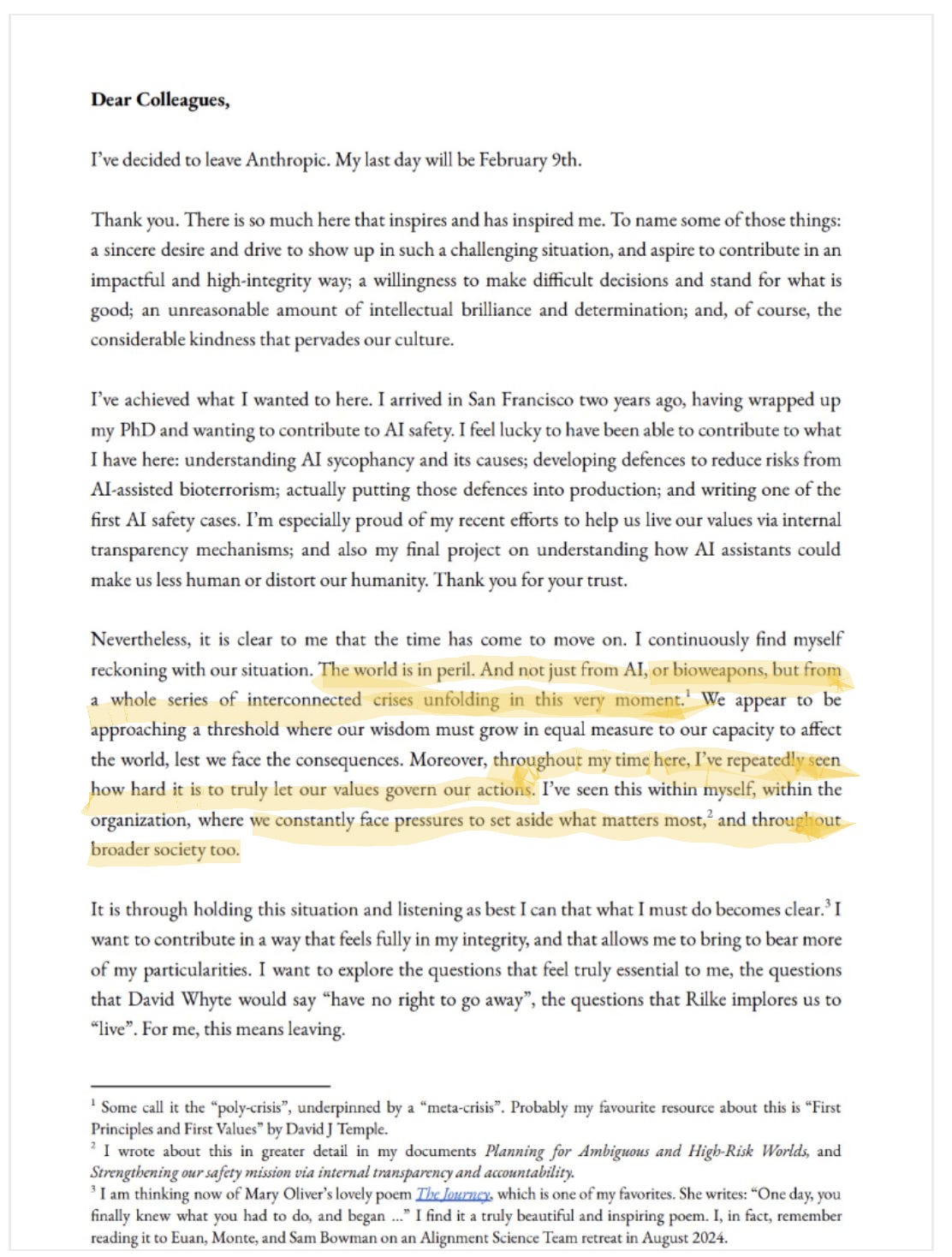

(4) Societal chaos. Mrinank Sharma, who earned a DPhil in Machine Learning from the University of Oxford a couple of years ago, holds a Master of Engineering in Machine Learning from the University of Cambridge, and was Anthropic’s lead safety researcher, resigned yesterday (yes, that’s the third major researcher from a top lab to resign in the last two days).

The insiders who are quitting know what is coming.

(5) Rapid Scientific Discovery

Recently, Isomorphic Labs unveiled IsoDDE, which doubles AlphaFold 3's accuracy on protein-ligand prediction. A "multi-agent robot system" coordinated 19 LLM agents to optimize perovskite synthesis in 3.5 hours, a task that normally takes months. The Allen Institute and Anthropic are designing custom DNA to solve biological challenges.

Predictions. Do we still need humans for those?

What to do?

Help students learn to work with non-human intelligences. Learning to work with non-human intelligences should be the single highest priority in schools today. At a minimum, it deserves more urgency and more resources than most of what currently fills the school day.

This is not a tool in the way a calculator or a search engine is a tool. This is a collaborator — one that can identify and fix its own mistakes, take unprescribed steps to accomplish goals, conduct rigorous research, produce polished professional outputs from rough inputs, and work autonomously for hours on complex tasks. The gap between students who know how to work with that collaborator and those who don’t will be the defining skills gap of the next decade.

This is not an argument against learning math, reading, science, or history. It’s an argument about proportionality. Most of a student’s day is spent memorizing content that is instantly retrievable, practicing procedures AI executes flawlessly, and developing narrow skills in isolation from the technological context in which they’ll actually be used. Meanwhile, the capabilities that will determine whether a student thrives — formulating good problems, evaluating AI-generated output, directing non-human intelligence toward meaningful goals, exercising judgment about when to trust and when to question, understanding the ethics of delegating cognitive tasks — receive almost no instructional time at all.

And this isn’t about teaching students to type prompts into ChatGPT. It’s about developing a genuinely new form of cognitive partnership. Students need to learn to decompose complex problems into tasks an AI can handle, to evaluate and verify output (especially when it’s confident and wrong), to iterate when initial results miss the mark, to integrate AI capabilities into larger projects requiring human judgment and creativity, and to maintain intellectual agency when a machine can do most of the cognitive heavy lifting.

These are skills that require practice, feedback, and structured instruction — exactly what schools are designed to provide.

Develop entrepreneurs. This also changes what entrepreneurship means — and who can do it. A single person working with AI can now build what used to require a funded team of engineers, designers, and analysts. The barriers to creating something real — an application, a service, a business — have collapsed.

A student with a clear idea of a problem worth solving and the ability to direct AI toward building a solution can go from concept to working product in an afternoon. A year ago that would have been a metaphor. Now it is a literal description of what’s possible. And this isn’t just an opportunity; it’s a necessity.

The economy that today’s students are entering may not have a place for them in someone else’s organization. They are going to have to make it on their own. Schools that teach students to identify problems, envision solutions, and orchestrate AI to build them aren’t teaching a nice-to-have elective. They’re giving students the capacity to create their own economic futures at a moment when traditional employment paths are disappearing beneath their feet. Entrepreneurship has always been valuable. When AI can serve as your entire technical team and traditional jobs are evaporating, it becomes essential.

Schools also need to be straightforward with students about what’s happening. Too much of education operates on an implicit promise that no longer holds — work hard, get good grades, follow the path, and a stable career will be waiting. Students deserve honesty about the world they’re actually entering. That means building awareness of how rapidly AI capabilities are advancing, what that means for the labor market, and why the old playbook may not work for them. This isn’t about frightening students. It’s about respecting them enough to tell the truth so they can make informed decisions about how they spend their time and energy. A student who understands the landscape can prepare for it. One who doesn’t will be blindsided by it.

Speech and debate offers a natural vehicle for this kind of consciousness-raising. When students research, argue, and cross-examine claims about AI’s impact on employment, ethics, governance, and human agency, they aren’t just learning about the topic — they’re developing the exact habits of mind the moment demands: evaluating competing evidence, constructing and stress-testing arguments, thinking critically about what powerful interests are telling them, and finding their own voice on questions that will define their generation. These aren’t abstract exercises. The motions students debate today — whether AI should replace workers, whether regulation can keep pace, whether schools are failing to adapt — are the policy fights and personal decisions they’ll face before they graduate.

Instructional redesign.

ASU’s ARC: Replacing the Gradebook With a Learning Record Built for the AI Era

Arizona State University is developing a concept called ARC — Academic Record of Capabilities — that represents a fundamental rethinking of how we track what students know and can do.

The premise is simple: the gradebook is a century-old construct that measures performance at a single point in time. It tells you what a student scored, not what they’re capable of, how their thinking has evolved, or how they approach complex problems. ARC is designed to capture that fuller picture — a learner-owned, AI-supported record that brings together artifacts, reflections, and experiences into a single evolving account of a student’s growth.

Three commitments anchor the design. First, agency — students actively choose what goes into their record and reflect on why it matters. Second, capability — the focus shifts from scores to what students can actually do and how they apply knowledge in real contexts. Third, supportive AI — intelligent tools help organize evidence and surface patterns, but don’t replace human judgment or assign grades.

What makes ARC particularly relevant right now is how it handles AI itself. Students are asked to articulate how they work with AI — when they use it, when they choose not to, and how they evaluate and revise its output. That relationship becomes part of the learning record, turning AI literacy from an abstract goal into documented, reflective practice.

For faculty, the model doesn’t add grading burden. Instead of reviewing everything a student uploads, faculty engage with selected “anchor” reflections — key moments of growth — and assess quality of reasoning, depth of engagement, and trajectory over time.

ARC isn’t a replacement for transcripts, a badge system, or an algorithmic evaluation tool. It’s a flexible space where students build a coherent narrative about their learning — one they own and can carry into job interviews, graduate programs, and careers. The foundations are already being built at ASU, from learner-owned records to AI-supported reflection and advising.

There is, however, time to adapt — though not as much as we might like.

Two practical constraints slow how quickly the gap closes between what AI can do in a demo and what it actually does across the economy. The first is permeation. Even transformative technology takes time to work its way through institutions, supply chains, regulatory frameworks, and organizational habits. That said, this transition will move faster than previous ones. AI doesn’t require new physical infrastructure the way electrification or the internet did: it runs on computers and networks that already exist, and unlike previous technologies, it can increasingly deploy and improve itself. The friction is real but lower than anything we’ve seen before.

The second constraint is energy. These models require enormous amounts of compute, and compute requires power. The United States is already facing significant energy limitations, with data center demand straining existing capacity and new generation taking years to permit and build. China, by contrast, is adding roughly one gigawatt of new energy capacity per day, primarily from renewables, positioning itself to power AI infrastructure at a scale the US currently cannot match. Until energy production catches up with demand, the full potential of AI will remain partially bottlenecked — buying time, but also raising serious questions about which countries will lead and which will fall behind.

These constraints are real, but they measure in years, not decades. The window for schools to prepare students is open now. It won’t stay open long.