DeepMind's Blueprint for Measuring AGI Looks a Lot Like a Debate Round.

Debate trains every cognitive faculty that the world’s leading AI lab says defines general intelligence.

Introduction

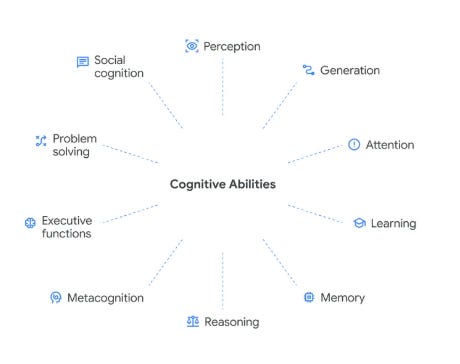

Yesterday, Google DeepMind dropped a paper that should stop every educator, policymaker, and parent in their tracks. “Measuring Progress Toward AGI: A Cognitive Framework” proposes a taxonomy of 10 cognitive faculties that, taken together, constitute general intelligence. The framework is meant to measure how close AI systems are to matching human cognition. But read it as an educator, and something else leaps off the page.

The 10 faculties DeepMind identifies — perception, generation, attention, learning, memory, reasoning, metacognition, executive functions, problem solving, and social cognition — are not just a map of the human mind. They are, almost perfectly, a map of what competitive debate trains.

This isn’t a coincidence. It’s a confirmation. And it has profound implications for how we prepare young people for a world where AI can do most cognitive work faster and cheaper than they can.

Here’s the uncomfortable part. The world’s largest technology companies are spending hundreds of billions of dollars, heading toward trillions, to build these 10 cognitive faculties into machines. Meanwhile, the one educational activity that systematically develops all 10 of them in human beings can barely survive as an after-school activity and is devalued in classrooms. We are in the middle of history’s most expensive engineering project to replicate general intelligence in silicon, while underfunding the most effective tool we have for building it in our children.

The Cognitive Taxonomy, in Brief

The DeepMind team, led by Ryan Burnell and including luminaries like Shane Legg (who helped coin the term “AGI”) and Meredith Ringel Morris, drew from decades of research in psychology, neuroscience, and cognitive science to identify the building blocks of intelligence. Eight are foundational faculties: perception, generation, attention, learning, memory, reasoning, metacognition, and executive functions. Two are “composite” faculties that require orchestrating the others: problem solving and social cognition.

Their key insight: a system (artificial or human) with significant weaknesses in even one of these faculties will struggle with real-world tasks. General intelligence requires breadth. It requires all of these working together.

Now let’s walk through each one and show what debaters already know in their bones.

1. Perception: Reading the Room Before Reading the Evidence

DeepMind defines perception as the ability to extract and process sensory information from the environment — visual, auditory, and textual. Their taxonomy breaks this down into low-level processing (detecting features, segmenting words) and high-level understanding (scene comprehension, reading comprehension, listening comprehension).

Debate is a perceptual marathon. A debater must simultaneously process a judge’s nonverbal cues (Are they nodding? Writing? Frowning?), listen to an opponent’s speech at high speed while identifying the structure beneath the words, and read evidence cards rapidly while flowing arguments in real time across multiple sheets of paper. The Lincoln-Douglas debater scanning a complex philosophical text for the key warrant, the Policy debater tracking four off-case positions while an opponent spreads at 300 words per minute, the Public Forum debater reading the room to gauge how a community judge is reacting: all of these are exercises in multimodal perception under pressure.

DeepMind specifically highlights “multi-sensory integration” — the ability to combine information from multiple modalities simultaneously. That’s every cross-examination, where you’re reading your opponent’s body language while listening to their words while scanning your flow for the contradiction you noticed three minutes ago.

2. Generation: More Than Just Talking

Generation, in DeepMind’s framework, covers the production of outputs: text, speech, actions, and — fascinatingly — internal thoughts. They note that generation captures execution ability, not just the ability to decide what to do.

Debate is, at its core, a generation engine. Every speech is an act of real-time text and speech generation under severe time constraints. But the taxonomy’s nuance matters here. DeepMind breaks generation into grammatical correctness, lexical selection (choosing the right words), prosody control (rhythm, pitch, stress), and emotional expression.

Any debate coach will recognize this as the difference between a novice who reads evidence in a monotone and a varsity debater who modulates their voice to signal which arguments matter most, who selects precise language to frame a disadvantage link, who shifts register between the technical jargon of a policy debate and the accessible storytelling of a final focus.

The paper also includes “thought generation” — the ability to produce internal reasoning that guides decisions. This is the silent work of debate preparation, the mental simulation that happens in prep time: If I run this argument, they’ll respond with X, which means I need Y in my back pocket. DeepMind notes that conscious thought is critical for problem solving and that there is “substantial evidence for its value in AI systems.” Debate is where young people build this muscle.

3. Attention: The Scarce Resource

DeepMind identifies three dimensions of attention: capacity (how much you can track at once), selective/attentional control (focusing on what matters while ignoring what doesn’t), and stimulus-driven attention (responding to unexpected changes).

Debate trains all three relentlessly. Attention capacity is tested every time a debater must flow multiple arguments simultaneously. Selective attention is the discipline of ignoring an opponent’s rhetorical flourishes to focus on the logical structure underneath (or, conversely, the judge’s discipline of tuning out delivery quirks to focus on argument quality). On top of these, debate demands sustained attention: maintaining focus through an hour-long round or a full day of competition.

And stimulus-driven attention? That’s the moment in cross-examination when your opponent accidentally concedes a critical point and you need to pivot instantly. It’s the moment a fellow legislator asks an unexpected question in Congressional Debate and you must redirect your entire framework on the fly.

DeepMind describes a “delicate balance” between narrow focus and environmental alertness. Debate is where students learn to hold that balance — to be deep in the weeds of an argument while remaining alert to the shifts happening around them.

4. Learning: Not Just Memorizing, Adapting

The taxonomy identifies multiple forms of learning: concept formation, associative learning, reinforcement learning, observational learning, procedural learning, and language learning.

Consider what a single debate season looks like through this lens. Students form new concepts (What is “fiat”? What is a “kritik”? What does “net benefits” mean as a decision calculus?). They learn by association (linking certain types of arguments to certain types of evidence, recognizing that certain judge paradigms correlate with certain voting patterns). They experience reinforcement learning with every ballot — wins and losses that reshape their strategic choices. They learn by observation, watching elimination rounds and emulating the techniques of successful debaters. They build procedural skills through the repetitive practice of flowing, timing, and extending arguments. And they learn new languages — not just the technical vocabulary of debate, but often the specialized discourse of economics, international relations, philosophy, or constitutional law.

DeepMind notes that truly robust systems must be able to “learn (and retain) new knowledge and skills over time.” Debate is one of the few educational activities that compresses this full spectrum of learning into a single semester.

5. Memory: The Architecture of Expertise

The taxonomy breaks memory into semantic memory (world knowledge), episodic memory (specific events), procedural memory (how to do things), prospective memory (remembering to do things later), and even forgetting (removing outdated information).

Debate builds extraordinary semantic memory: debaters develop deep domain knowledge across multiple fields, from nuclear proliferation to indigenous rights to trade policy, often within a single topic. They build episodic memory through the accumulation of rounds, remembering that a particular argument failed against a particular team at a particular tournament, and adjusting accordingly. Procedural memory is the autopilot of flowing, signposting, and road-mapping that frees cognitive resources for higher-order thinking. And prospective memory? That’s prep time: In my 2NR, I need to remember to extend the economy DA and kick the counterplan.

Perhaps most interestingly, DeepMind includes forgetting as a cognitive faculty — the ability to prune outdated or irrelevant information. Debaters know this instinct well. Between topics, you must let go of the specific evidence and case structures from last season to make cognitive room for the new resolution. Within a round, you must learn to drop arguments strategically — recognizing that a lower-priority contention isn’t worth extending, even if you have answers to it.

6. Reasoning: The Obvious One — But Deeper Than You Think

Reasoning is where most people would start the debate-cognition connection, and the taxonomy confirms why. DeepMind identifies deductive reasoning, inductive reasoning, abductive reasoning, analogical reasoning, and mathematical reasoning.

Debate deploys all of these, often within a single speech. Deductive reasoning is the structure of a well-built argument: if the premise is true, the conclusion follows necessarily. Inductive reasoning is what debaters use when generalizing from case studies — “Country X adopted this policy and saw Y result, therefore this policy tends to produce Y.” Abductive reasoning — generating the best explanation for a set of observations — is what happens when a debater reads the ballot after a loss and tries to figure out what went wrong.

Analogical reasoning deserves special attention. DeepMind defines it as identifying similarities between situations to draw conclusions about unknowns. This is the beating heart of debate argumentation — the ability to say, “This situation is structurally similar to that historical case, and here’s why the same logic applies.” It’s also the core of what makes debate transferable to everything from law to business to medicine.

7. Metacognition: Thinking About Your Thinking

This may be the most important faculty on the list, and the one debate trains most distinctively. DeepMind defines metacognition as a system’s knowledge of its own cognitive processes and its ability to monitor and control them. They break it into metacognitive knowledge (knowing your own strengths and limitations), metacognitive monitoring (evaluating your own performance in real time), and metacognitive control (adjusting strategies based on that monitoring).

Debate is an engine of metacognition. Every post-round reflection — “I lost because I didn’t extend the impact” — is metacognitive monitoring. Every strategic choice — “I’m better at technical debate than persuasive speaking, so I should run more theory arguments in front of flow judges” — is metacognitive knowledge applied. Every mid-round pivot — “This argument isn’t landing, I need to shift to a different framing” — is metacognitive control.

DeepMind specifically calls out confidence calibration (knowing how likely you are to be right), error monitoring (noticing your own mistakes), and learning strategy selection (choosing how to study based on what you know about your own gaps). Debate coaches will recognize these as the exact skills that separate good debaters from great ones — and, not coincidentally, the skills that transfer most powerfully to the rest of students’ lives.

But there’s a deeper metacognitive dimension that debate unlocks, and it connects directly to social cognition: cognitive empathy — the ability to understand another person’s perspective without necessarily agreeing with it. In debate, this isn’t a “nice-to-have” social skill. It is a tactical requirement. When a student is forced to switch sides — arguing for a carbon tax in Round 1 and against it in Round 2 — they are performing a high-level cognitive reset, deliberately dismantling their own argumentative framework and rebuilding it from the opposing foundation. This is metacognition in its most demanding form: not just monitoring your own thinking, but actively overriding it.

This is what I call the “switch-side advantage,” and it reveals a fundamental difference between how standardized schooling and debate develop the mind. Standardized schooling rewards answer seeking — finding the one right response and filling in the correct bubble. Debate rewards system mapping — understanding the entire landscape of an issue, including the strongest arguments against your own position. The consequences are profound. By arguing the “other” side, students develop intellectual humility. They realize that complex problems rarely have easy villains. This reduces the certainty bias that, in AI systems, manifests as hallucination, and in humans, manifests as polarization. And it builds predictive modeling: to win, a debater must construct a mental model of their opponent’s best possible argument. This is the peak of theory of mind — you aren’t just reacting to another intelligence, you are simulating it to find its weaknesses.

There’s a line often attributed to F. Scott Fitzgerald: the test of a first-rate intelligence is the ability to hold two opposing ideas in mind at the same time and still retain the ability to function. Debate is where students learn to pass that test — not as a literary aphorism, but as a lived cognitive practice. A skilled debater doesn’t just tolerate contradiction; they manage it. They can simultaneously believe that free trade increases aggregate wealth and that it devastates specific communities, and rather than collapsing into paralysis or retreating to one side, they learn to navigate that tension, weigh the tradeoffs, and make a reasoned case while fully understanding its vulnerabilities. This is not a skill most education even attempts to develop. Schools generally teach students to resolve ambiguity — to find the answer, pick a side, eliminate the wrong option. Debate teaches students to inhabit ambiguity, to sit with the discomfort of genuinely strong arguments on both sides of a question, and to think clearly anyway. In a world where AI systems confidently produce single answers to questions that don’t have single answers, the ability to hold complexity without flinching may be the most important cognitive skill we can cultivate.

8. Executive Functions: The Command Center

Executive functions, in the taxonomy, include goal setting and maintenance, planning, inhibitory control, cognitive flexibility, conflict resolution, and working memory.

Debate is essentially an executive function obstacle course. Goal setting happens before every round (What is my strategy? What must I win to take the ballot?). Planning is the architecture of every constructive speech and the strategic mapping of every rebuttal. Inhibitory control is the discipline of not responding to every argument — of letting go of the clever comeback that would waste time and instead focusing on what matters for the decision calculus.

Cognitive flexibility — the ability to switch between different modes of thinking — is tested every time a debater must pivot from an economic frame to a moral one, or from offense to defense, or from a policy discussion to a theoretical one about the rules of the activity itself. Conflict resolution (managing contradictory goals or competing responses) is what happens when a debater realizes their two best arguments are in tension with each other and must decide which to prioritize.

Working memory — manipulating information internally in service of a goal — is perhaps the most taxed cognitive faculty in any debate round. Holding your opponent’s argument structure in mind while constructing your response, while tracking the time, while anticipating the next speech, is a working memory workout that few other activities can match.

9. Problem Solving: The Composite Test

DeepMind designates problem solving as a “composite” faculty — it requires perception, reasoning, memory, planning, and execution all working together. They highlight fluid reasoning (solving novel problems), mathematical problem solving, algorithmic problem solving, commonsense problem solving, and knowledge discovery.

Debate is, at bottom, a problem-solving activity. Each resolution is a problem. Each round presents a novel instantiation of that problem: a new opponent, a new judge, a new argument you’ve never seen before. The debater must understand the problem, retrieve relevant knowledge, break it into sub-goals, plan a sequence of responses, and execute under time pressure. This is the orchestration DeepMind describes, performed live, with an adversary actively trying to make it harder.

The paper notes that commonsense problem solving includes temporal reasoning, spatial reasoning, causal reasoning, and intuitive physics. Debate is heavy on the first three — understanding the sequencing of policy implementation, the geographic dimensions of international relations, and the causal chains that connect policy mechanisms to real-world outcomes.

And knowledge discovery — “the ability to generate novel hypotheses, experiments, and solutions”? That’s the debater who reads a new academic paper and sees an argument nobody else has run yet.

10. Social Cognition: The Faculty We Forget

The final faculty is social cognition — processing social information and responding appropriately. DeepMind includes social perception (reading facial expressions, tone, body language), theory of mind (reasoning about others’ mental states), and social skills (cooperation, negotiation, persuasion).

This is where debate’s educational value becomes undeniable. Theory of mind — the ability to model what your opponent believes, what the judge values, what the audience expects — is the foundation of strategic debate. You cannot construct an effective rebuttal without modeling your opponent’s thinking. You cannot persuade a judge without understanding their decision-making framework. You cannot conduct a productive cross-examination without predicting how your opponent will respond to different lines of questioning. This isn’t sentiment analysis. It isn’t pattern matching. It is the real-time simulation of another human mind — and the strategic exploitation of what that simulation reveals.

The taxonomy even lists negotiation, persuasion, and (notably) deception as social skills relevant to intelligence. Debate trains the first two explicitly and gives students frameworks for recognizing and responding to the third. The DeepMind team notes that persuasion “could be considered a harmful capability depending on the context.” Debate is the educational space where we teach persuasion within ethical bounds — with rules, with judges, with norms about evidence quality and honest argumentation.

The Gap Between Silicon and Soul

This brings us to a distinction the DeepMind paper gestures toward but doesn’t fully confront. While the technology industry is teaching AI to perform these 10 faculties, there is a fundamental difference in how machines and human debaters apply them. The comparison is instructive:

Metacognition. AI systems produce statistical confidence scores based on data probabilities. A debater performs real-time self-correction based on a judge’s facial expression — noticing a furrowed brow and pivoting mid-sentence to reframe an argument that isn’t landing.

Social Cognition. AI systems perform sentiment analysis and generate politely calibrated responses. A debater practices persuasion — the ability to move a human mind by finding shared values, building trust, and making an opponent’s position feel untenable from the inside out.

Executive Functions. AI systems manage token limits and prompt-chaining sequences. A debater performs crisis management: choosing which three arguments to drop when there are only 30 seconds left, making that judgment based on a holistic read of the round that no prompt chain could replicate.

The point isn’t that AI can’t do these things. The point is that how debate trains these faculties — under adversarial pressure, in real-time social contexts, with genuine stakes and genuine uncertainty — produces a kind of cognitive resilience that no benchmark can fully capture. Machines optimize these faculties for accuracy. Debate develops them for wisdom.

What This Means: The Case for Universal Basic Debate, Quantified

Here’s the punchline. DeepMind’s paper argues that a system with “significant weaknesses in one or more of these ten faculties is likely to be unable to perform some real-world tasks that most humans can perform.” In other words, general intelligence requires training across the full spectrum.

Now look at our schools. Which educational activities systematically train all 10 cognitive faculties?

Not lectures (primarily perception and memory). Not standardized tests (primarily reasoning and memory).

Debate is the closest thing we have to a comprehensive cognitive training program. It is, in effect, a gym for general intelligence.

What makes debate extraordinary isn’t just that it trains all 10 faculties. It’s that it forces their simultaneous deployment. A debater in the middle of a rebuttal is performing metacognition (monitoring whether their argument is landing), adversarial reasoning (anticipating the opponent’s next move), social modeling (reading the judge’s disposition), executive control (managing time and prioritizing arguments), memory retrieval (pulling relevant evidence), and generation (producing coherent speech) — all at the same time, all under the pressure of a ticking clock. No other common educational activity demands this kind of cognitive orchestration.

That simultaneity is the key. Because here’s what AI is going to do to the labor market, to civic life, to every domain of human endeavor: it is going to commoditize isolated cognition. Looking something up? AI does it faster. Summarizing a document? AI does it cheaper. Basic reasoning from premises to conclusions? AI is already competitive. The 10 faculties that DeepMind describes are, one by one, falling within reach of artificial systems. And when each faculty can be performed in isolation by a machine, the human who has only been trained to exercise them in isolation — one at a time, on a worksheet, in a quiet room — will find themselves outmatched.

What AI cannot yet do — and what may remain distinctively human for a long time — is exercise these faculties together, in the messy, high-stakes, socially embedded contexts where they actually matter. The capacities that will differentiate humans in an AI-saturated world are not lookup, summarization, or even basic analysis. They are judgment — the ability to weigh incommensurable values under uncertainty. They are framing — the ability to define the terms of a problem in ways that shape what counts as a solution. They are strategic thinking under uncertainty — making high-consequence decisions with incomplete information and adversarial pressure. And they are understanding other humans — modeling minds, reading rooms, building trust, and persuading people whose interests diverge from your own.

These are not individual skills that can be taught in separate units. They are emergent properties of a mind trained to operate across multiple cognitive faculties at once. They are what debate produces.

Debate doesn’t just train these skills. It trains them together, under pressure, in real time, against adversarial opposition — which is exactly the condition in which they matter most.

It’s time to stop thinking of debate as an extracurricular — a niche competitive activity for ambitious teenagers. Debate is a pedagogy. It is, arguably, the pedagogy for the AI age: the most efficient method we have for developing the full suite of cognitive capacities that will define human value in a world of increasingly capable machines. Not a kid’s activity. The future of teaching and learning itself.

The Trillion-Dollar Irony

Let that sink in for a moment. Google, Microsoft, Meta, Amazon, OpenAI, Anthropic, and a growing constellation of startups and sovereign wealth funds are collectively spending hundreds of billions of dollars — trending toward trillions — to build artificial systems that can do what the DeepMind taxonomy describes. They are hiring the world’s best cognitive scientists, neuroscientists, and engineers. They are constructing massive data centers and designing novel chip architectures. They are pouring resources into perception models, reasoning engines, memory systems, metacognitive monitoring, and social intelligence. The entire thrust of the most capital-intensive technology race in human history is aimed at replicating, in silicon, the 10 cognitive faculties this paper identifies.

And yet.

Most American schools cannot find the funding for a debate coach. The activity that trains all 10 of these faculties — in actual human beings, the ones who will need to work alongside, govern, and make sense of these AI systems — survives on volunteer labor, booster club fundraising, and the stubbornness of a few dedicated educators. School boards cut debate programs to save $30,000 while tech companies raise $30 billion. Districts that can’t afford a debate coordinator serve students who will graduate into a labor market being reshaped by systems that cost hundreds of millions to train on the exact cognitive skills those students never got to develop.

The numbers are almost farcical. A single GPU cluster for training a frontier AI model costs more than the combined annual budgets of every high school debate program in the country. The compute budget for one large language model could fund a debate program in every public middle school in America — for years. We are building machines that can reason, persuade, and adapt, while defunding the only widely available educational activity that systematically teaches young humans to do the same.

And it gets worse. Alongside the paper, DeepMind launched a Kaggle hackathon — “Measuring Progress Toward AGI: Cognitive Abilities” — offering $200,000 in prizes for researchers to build evaluations targeting five cognitive faculties where the measurement gap is largest: learning, metacognition, attention, executive functions, and social cognition. Read that list again. Those are precisely the faculties that debate trains most distinctively and that traditional schooling neglects most completely. Google is putting serious money on the table to figure out how to measure these capacities in AI systems, because they understand that what gets measured gets improved.

Now consider the conversation happening in education. For decades, educators and administrators have resisted scaling debate precisely because, they argue, these skills are too complex to assess, too subjective to benchmark, too difficult to standardize. Reasoning? Hard to measure. Metacognition? Impossible to test. Social cognition? Too squishy. Executive function? Not on the state exam. These are the excuses that have kept debate marginalized as an extracurricular oddity rather than recognized as core pedagogy.

The technologists just called our bluff. They are not only measuring these capacities — they are building entire evaluation infrastructures around them, funding global competitions to refine those measurements, and treating the results as mission-critical benchmarks for the most important technology of our time. They are beating educators at our own life’s work. The cognitive scientists at DeepMind have done what schools of education have failed to do for generations: build a rigorous, empirical framework for assessing the full breadth of human intelligence — and then put money behind making it real. If Google can figure out how to benchmark metacognition and social cognition in a machine, surely we can figure out how to assess them in a seventeen-year-old.

This isn’t just a funding gap. It’s a civilizational misallocation. We are investing unprecedented resources in building artificial general intelligence while neglecting the most proven method we have for developing human general intelligence. The DeepMind paper makes the implicit case: if these 10 faculties define what it means to be cognitively capable, then any society serious about its future would invest in developing them in its people — not just in its machines.

The DeepMind paper concludes by calling for “a grounded, measurable scientific endeavor” to track progress toward AGI. I’d add a parallel call: a grounded, measurable educational endeavor to ensure that human beings develop the full spectrum of cognitive capabilities that define intelligence — before the machines do it for us.

But let me be clear about the scale of what I’m proposing, because this is where most advocacy for debate falls short. The goal cannot simply be for every school in America to have a debate team. That would be wonderful, but it would be insufficient. A debate team is still an extracurricular — a self-selected group of 15 or 30 students in a building of 1,500, meeting after school while the rest of the student body goes home.

The goal must be for every student in America — and ultimately, in the world — to be regularly speaking, arguing, and debating as a core part of their education. Not once a semester for a “debate unit.” Not as an elective for the kids who already like to talk. Every week. In every subject. As a foundational mode of learning.

Think about what school actually looks like for most students right now. They sit. They listen to a teacher talk. They read. They write. They take a test. The dominant modalities are passive reception and solitary production — perception and memory, in DeepMind’s terms, with occasional reasoning on a worksheet. The student’s voice, in many classrooms, is the thing that gets shushed. Speaking up is a disruption, not a pedagogy.

Now compare that to what the DeepMind taxonomy tells us intelligence actually requires. Generation — producing speech and text in real time. Social cognition — modeling other minds and responding to social dynamics. Metacognition — monitoring your own thinking and adjusting on the fly. Executive function — planning, inhibiting impulses, managing competing demands. These are not skills you develop by sitting quietly and absorbing information. They are skills you develop by doing — by speaking, by arguing, by defending a position and having it challenged, by listening to a counterargument and figuring out in real time whether it’s stronger than yours.

Debate-across-the-curriculum isn’t a radical idea. It’s what the science has been pointing toward for decades. The DeepMind paper just made the case in terms that the technology world — and hopefully, the policy world — can’t ignore. Every child who spends a school day listening without speaking, absorbing without generating, receiving without challenging, is a child whose cognitive development is being artificially constrained. We are leaving the most important faculties of human intelligence — the ones that will matter most in the AI age — on the table.

If we want our schools to develop general intelligence in humans — the real thing, not test scores, not GPAs, but the full-spectrum cognitive capability that the DeepMind taxonomy describes — then we need our classrooms to come alive. We need rooms full of students on their feet, making arguments, challenging each other, defending positions they built and positions they were assigned, thinking out loud and in public, failing and adjusting and trying again. Not quiet compliance. Not passive absorption. The hum and clash and energy of minds actively working against and with each other. That is what a classroom optimized for general intelligence looks like. It looks like a debate round.

We have the activity. We’ve had it for centuries. The science just caught up. The money just hasn’t. And the ambition — to make debate not a club, but a curriculum — hasn’t caught up either. It’s time.