Cultivating Human Superintelligence: Education’s New Mission

We can do it with much of our existing curriculum if we prioritize the "Essential Curriculars"

TLDR

As industry pours trillions into building silicon minds that learn, argue, invent, and collaborate in order to produce an intelligence explosion, education risks falling behind. The divide stems less from unequal access to technology than from unequal opportunities within schools: some students learn to reason, collaborate, and co-create with AI, while others are pushed to rote tasks and focus on recall. Over time, these differences will create a divide that is not geopolitical but cognitive, separating humans and societies who co-create with intelligence and make good decisions from those who are displaced by it.

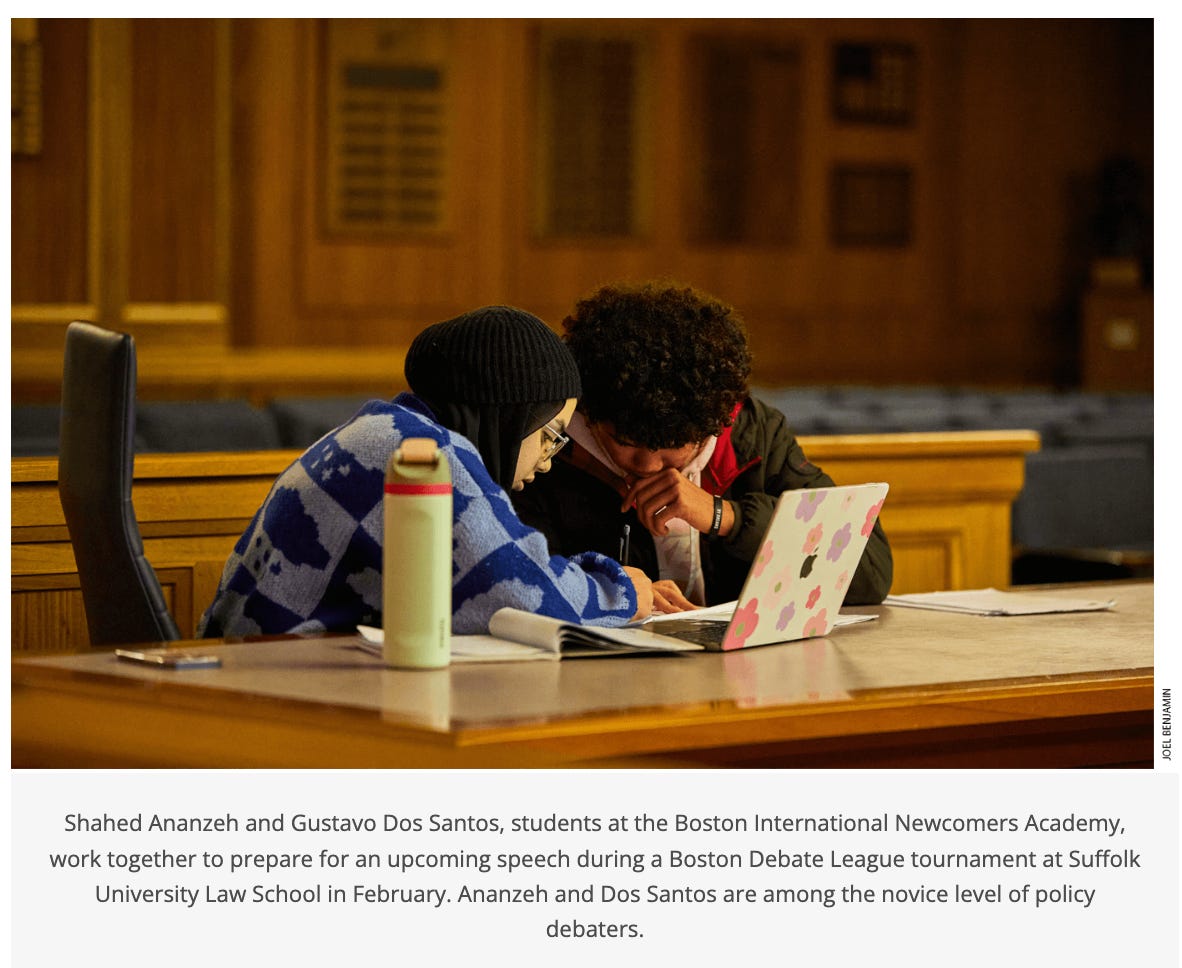

Fortunately, a blueprint already exists to overcome this divide. Debate, robotics, journalism, entrepreneurship, and theater programs cultivate the recursive, collaborative, and moral intelligence humans need to protect their interests and agency in an AI world. These so-called “extras” are the essential curriculars for a world of human superintelligence, and every community could have an Academic Superintelligence Center (ASIC) dedicated to growing it.

Schools could even find donors to support such centers. Wouldn’t you want your name on the place that helps the next generation develop Human Superintelligence?

Might it push you along if you knew the College Board seems headed in this direction?

The College Board is launching a new exploratory effort to design and scale innovative ways for students to build durable skills — the collaboration, problem-solving, and communication abilities that employers value and that students carry with them for life. ...This work sits at the intersection of education and the workforce... You’ll be part of a team creating something new: a program that brings the world of work into schools and helps students develop skills that last a lifetime. ...In this program, we aim for students to: Take on real business challenges from real employers, collaborate in small teams to analyze problems and propose solutions and gain firsthand experience working with professionals...

Trillions for Silicon Superintelligence

Trillions of dollars, including $112 billion in the last 3 months alone, are now flowing into one goal: machine superintelligence.

Every major AI company on the planet, from Silicon Valley to Shenzhen, is racing to build systems that don’t just store or recall knowledge, but that learn (MIT), reason (NVIDIA), remember (DeepMind), evolve (Novikov et al), discover (Tao et al), and collaborate (Nourzad et al).

In “Self Play and Autocurricula in the Age of Agents,” Rohan Virani explains how AI systems now train themselves through self-play, generating an autocurriculum: a sequence of tasks that continually stretch the agent just beyond its current ability. “DreamGym” (Chen et al) gives AI agents a “training gym” where they can learn through self-generated experiences, adapting tasks and feedback dynamically.
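
As a rough illustration of the autocurriculum idea described above, the Python sketch below sets each new task slightly above the learner’s current skill and lets skill grow after each success. The `Learner` class, the `stretch`, `max_stretch`, and `learning_rate` parameters, and the update rule are all hypothetical simplifications chosen for illustration; they are not taken from Virani’s essay or from DreamGym.

```python
from dataclasses import dataclass


@dataclass
class Learner:
    """Toy learner with a single numeric skill level (an illustrative assumption)."""
    skill: float = 1.0
    learning_rate: float = 0.5   # fraction of the gap closed after a success
    max_stretch: float = 1.0     # tasks further than this above skill are failed

    def attempt(self, difficulty: float) -> bool:
        # Succeed only when the task is within reach of current skill.
        return difficulty <= self.skill + self.max_stretch

    def update(self, difficulty: float, succeeded: bool) -> None:
        # Learn only from solved tasks that were harder than current skill.
        if succeeded and difficulty > self.skill:
            self.skill += self.learning_rate * (difficulty - self.skill)


def next_task(skill: float, stretch: float = 0.5) -> float:
    """Pick the next task just beyond the learner's current ability."""
    return skill + stretch


def run_autocurriculum(rounds: int = 10) -> list[tuple[float, bool, float]]:
    """Return (difficulty, succeeded, skill_after) for each round."""
    learner = Learner()
    history = []
    for _ in range(rounds):
        difficulty = next_task(learner.skill)
        succeeded = learner.attempt(difficulty)
        learner.update(difficulty, succeeded)
        history.append((difficulty, succeeded, learner.skill))
    return history


if __name__ == "__main__":
    for difficulty, succeeded, skill in run_autocurriculum():
        print(f"task={difficulty:.2f} solved={succeeded} skill={skill:.2f}")
```

The point of the sketch is the loop, not the numbers: because every task is generated from the learner’s current state, the curriculum rises with the learner instead of being fixed in advance. A task set far above current skill (for example `Learner().attempt(5.0)`) is simply failed and teaches nothing in this toy model.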

The outlines of this race are already visible:

At Microsoft AI, Mustafa Suleyman’s Superintelligence Team is pursuing “Humanist Superintelligence.” The focus is on medical superintelligence, with Suleyman claiming they have a line of sight to medical superintelligence in 2-3 years. See also: MAI-DxO.

At Meta, Mark Zuckerberg has reorganized divisions under Superintelligence Labs.

OpenAI has shifted its focus from general intelligence to superintelligence alignment.

Anthropic predicts its models will “surpass human capabilities in almost everything” within 2-3 years.

Safe Superintelligence Inc. (SSI), founded by Ilya Sutskever, exists for this one purpose.

China’s leading labs (Baidu, Kimi Thinking 2 (“adaptive reasoning”), Zhipu AI) are moving just as quickly toward collective superintelligence, and they are open-sourcing their models.

This superintelligence is no longer a speculative concept. It’s an engineering pipeline that is being scaled with budgets larger than some nations’ GDPs. NVIDIA’s assets are worth $5 trillion. So are Saudi Arabia’s assets (Diamandis et al).

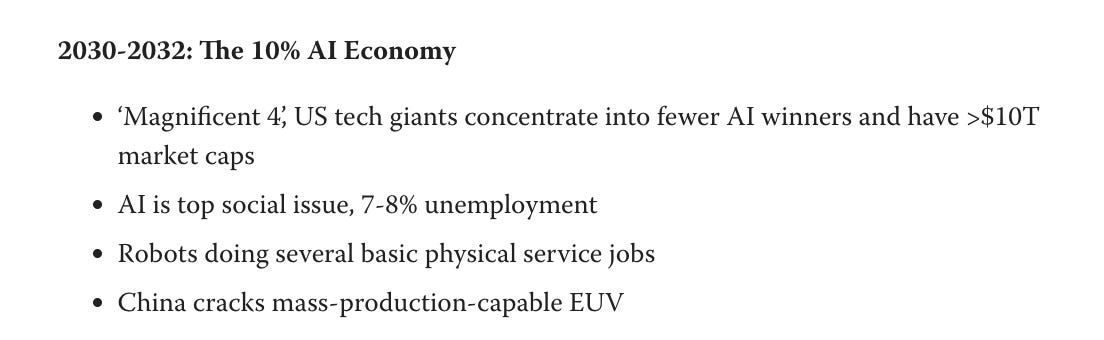

Most of the top researchers place its arrival in the early 2030s, though some expect it a bit sooner and some later. In the end, the exact date doesn’t really matter, as accelerating AI capabilities will have enormous economic and social implications well before we get there. AI is already impacting the economy.

Companies and many governments are preparing for it. AGI was mentioned 53 per cent more times in companies’ earnings calls in the first quarter of 2025 than in the same period in the previous year.

What percentage of schools are preparing their students for even a potential “AGIish” reality?

What about a world where AI can just invent and improve mathematical ideas, generating and testing solutions until it finds the best ones (Georgiev et al)?

What about a world where mathematical discovery is made at scale (Tao)?

What about a world where (humanoid) robotics advances at exponential rates through vision language action models and billions of hours of synthetic training data? Robots so real that companies have to prove they aren’t fake.

If you were to ask your child’s school what they are doing to prepare your child for a world of machine superintelligence, what would they say?

Companies Develop Intelligences We Often Ignore

The world’s most advanced models are being optimized for continuous learning. They don’t merely output answers; they learn from feedback. They refine reasoning through conversation. They evolve with every prompt.

OpenAI’s latest prototypes literally “watch themselves think” (aka metacognition). Most models now argue with themselves before responding. Microsoft and others are chaining multiple agents together in dynamic reasoning loops that mimic collaborative cognition. We are, in real time, watching machines learn how to learn, argue to understand, and invent by interacting.

On the other hand, a lot of education today is obsessed with what I think of as narrow intelligence — primarily rule-following, answer-getting, standardized performance. Students competing against each other for ranking on knowledge acquisition.

Students spend months preparing for tests like the SAT, ACT, or state assessments where success depends on memorizing formulas, practicing test-taking strategies, and selecting the “right” answer under time pressure, not on reasoning, creativity, or ethical judgment. Essays are graded by rubrics that reward rigid structures (“five-paragraph essays”) and penalize unconventional thinking. Curves and GPA rankings pit students against one another for scarce “A” grades, encouraging optimization and gaming the system rather than collaboration or deep understanding.

That was useful when knowledge was scarce. That advantage is worth less when knowledge is ambient and many ranking differentials can be instantly offset by AI.

But we keep racing to the bottom of the cognitive pyramid, refining and automating the kind of intelligence that machines excel at and are already getting closer to mastering and moving beyond. The irony is breathtaking:

Machines are learning to reason through exchange in collaboration with many AIs.

Students are punished for collaborating, pushed to do their “own” work.

What the AI labs are calling emergence — the spontaneous appearance of new capabilities through interaction — is what is essential as machine intelligence evolves. Yet true human intelligence has always been emergent. It arises in friction through interaction, not conformity. In conversation, not compliance.

This is the paradox at the heart of 21st-century education: as machine learning grows deeper, human learning grows thinner.

Meanwhile, in the EdTech World

Parts of the EdTech sector are booming, not to revolutionize learning, but to make traditional education cheaper, faster, and more efficient. Platforms like Quizlet, IXL, or Khan Academy often reward speed and accuracy on repetitive questions. They reinforce recall and rule-application but rarely invite students to question, connect, or create. Adaptive learning systems promise “personalized mastery.” Automated tutors promise “AI-powered practice.” But the real product is the same: compliance, repetition, and optimization of the known.

This locks in the status quo, ensuring that silicon intelligence outpaces us intellectually.

In many classrooms and learning platforms, students are no longer learning how to think—they’re learning how to press the right buttons to trigger machine approval. The LMS has replaced the mind as the locus of control.

Standardization and Sorting the Deplorables

For more than a century, education has functioned less as a system for cultivating potential and more as a ranking machine—standardizing what every student learns, testing how well they can repeat it, and sorting them into hierarchies of “ability.” This structure didn’t just limit individual opportunity; it likely slowed our evolution as a species. By rewarding conformity over curiosity, we suppressed the very diversity of thought that drives creative invention, moral progress, and collective intelligence. Standardized curricula turned learning into a filter rather than a force for emergence—optimizing for efficiency instead of imagination.

The standardization of curriculum in the early 20th century wasn’t presented as a sorting mechanism—it was sold as democratization. Every child, regardless of background, would learn the same things, take the same tests, be measured by the same standards. Finally, merit could be objectively assessed.

But standardization contains an inherent contradiction: the moment you make everyone learn identical content and measure them against a single scale, you haven’t created equality—you’ve created a ranking system. And rankings, by definition, require winners and losers. The curriculum became a uniform filter designed to separate “the capable” from “the deficient,” with each grade level, each test, each tracking decision functioning as another gate that closed behind those who didn’t conform to its narrow definition of intelligence.

The education system didn’t just rank students—it created a comprehensive class taxonomy that determined who deserved resources, respect, and a voice in shaping the future. For decades, this sorting mechanism functioned as society’s primary legitimation tool: those who excelled within its narrow parameters were designated “valuable”—credentialed, deserving of high incomes, cultural authority, and political power. Those who didn’t were implicitly marked as deficient, their struggles reframed as personal failures rather than systemic design flaws.

This created a credentialed elite that increasingly believed its own mythology—that educational attainment reflected inherent merit rather than successful navigation of an arbitrary filtering system. As globalization and automation decimated industries, this elite doubled down on education as the solution: “Learn to code. Go to college. Adapt.” The subtext was clear: if you’re struggling, you didn’t work hard enough, weren’t smart enough, didn’t matter enough.

When Hillary Clinton’s “basket of deplorables” comment surfaced in 2016, it crystallized what millions already felt—that they’d been sorted into society’s discard pile by people who viewed them with contempt. The comment wasn’t an aberration; it revealed the logical endpoint of a century-long sorting system. If education determines human value, and you “failed” within that system, then you are deplorable—irredeemable, disposable, unworthy of empathy or economic security.

Modern populism, on the left (Mamdani) and the right (Trump), is fundamentally a revolt against this sorting regime and the extraction it enables. When people support figures who promise to “burn it all down,” they’re not confused or irrational. They’re responding to a rigged taxonomy that:

Declared them worthless through educational credentialism

Blamed them for structural failures (deindustrialization, wage stagnation, healthcare collapse)

Extracted wealth from their communities while lecturing them about “disruption” and “creative destruction”

Denied them dignity by treating their concerns as pathology rather than legitimate grievance

The Solution: A Call for Human Superintelligence

“Parts of machines will supersede human intelligence… Machine-based intelligence will do a lot of powerful things, but there is a profound place for human intelligence to always be critical in our human society.” — Fei Fei Li, founder of World Labs

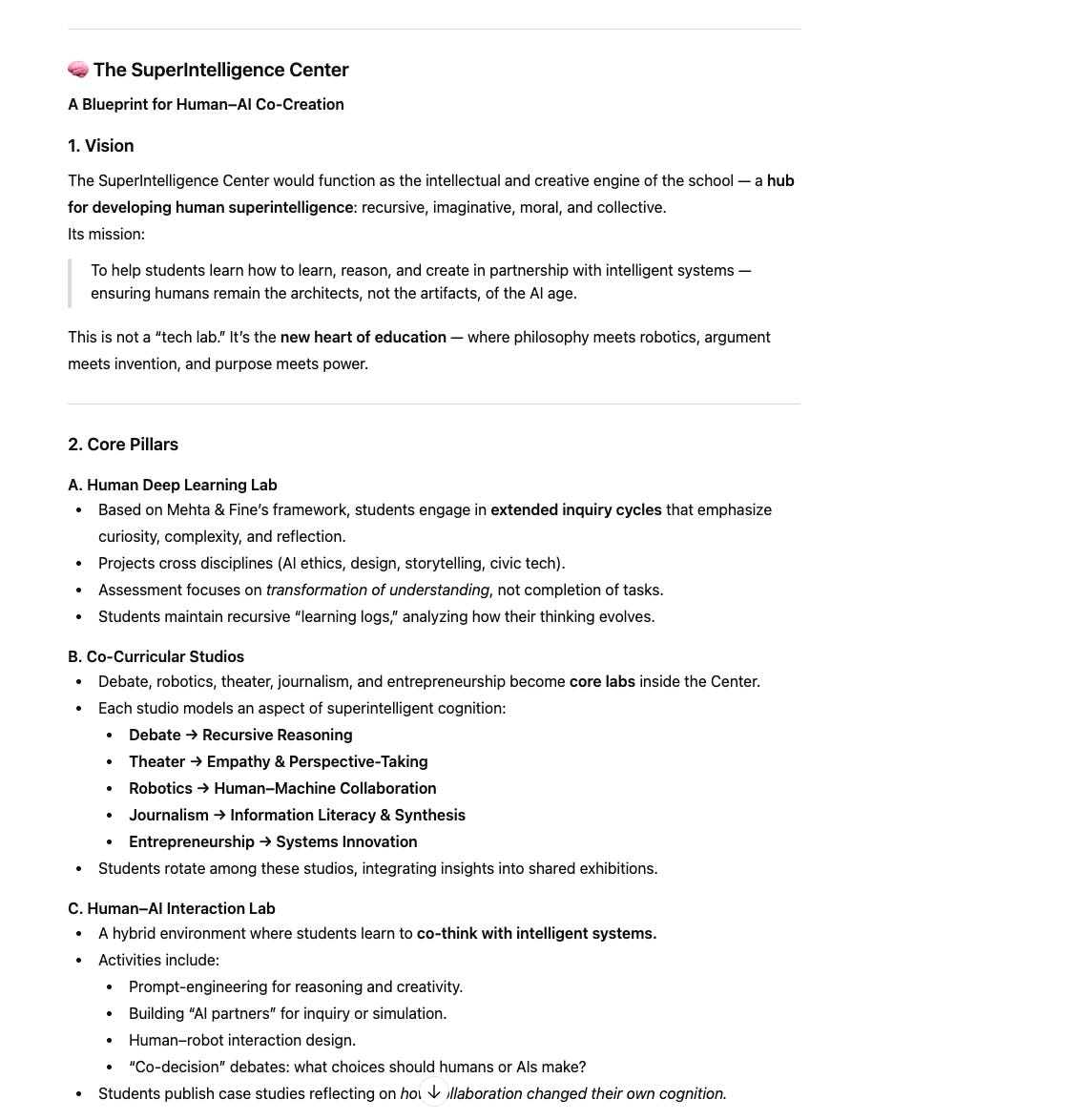

If companies are going to build superintelligence in machines, we must cultivate superintelligence in students.

That means cultivating recursive learners who grow through curiosity, collaboration, and self-reflection. It means treating education as a system of emergence, not efficiency. It means shifting from “What do students know?” to “What can students become?”

The future will not be written by those who memorize the most. It will be written by those who imagine the most. By those who collaborate with all intelligences, human and machine.

Mehta & Fine call it human deep learning — the kind of learning that reorganizes the mind rather than just filling it. It’s recursive, reflective, and social. We unpacked it in great detail in Humanity Amplified.

Ironically, humans have been capable of this for millennia. We just stopped teaching it. We used to call it philosophy. We used to call it debate. We used to call it invention. We used to call it entrepreneurship.

It’s what happens when students argue through complexity, build things that can fail, and learn from that failure. That is human superintelligence. It’s what we need to make sure our students develop.

“Friction,” which everyone talks about these days, emerges in the moments when learning becomes shared: when students must navigate the unpredictable dynamics of collaboration, judgment, and discernment. It arises when a group must make sense of conflicting ideas, divide roles, or defend competing visions, not when a single student sits at a desk writing a paper with no help from any human or machine.

In that friction, students encounter ambiguity and disagreement, which force them to listen, adapt, and refine their thinking. These are the crucibles of human deep learning: the design challenge that fails three times before it works, the debate where every argument reshapes a belief, the group project that demands not just answers but decisions. Friction is the medium where intelligence evolves through interaction.

Five Design Moves Toward a Superintelligent Education

Here is a road map for building it:

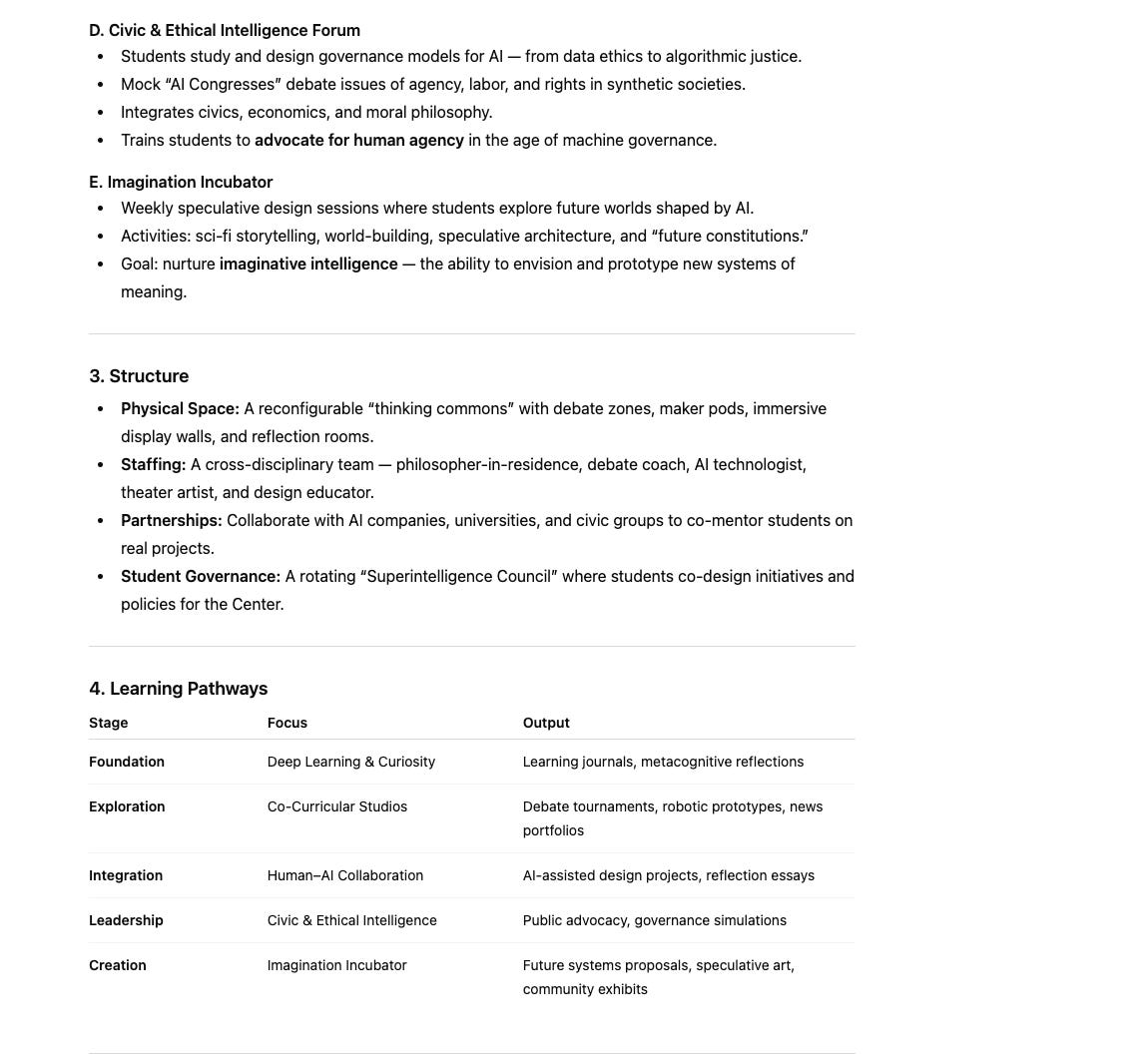

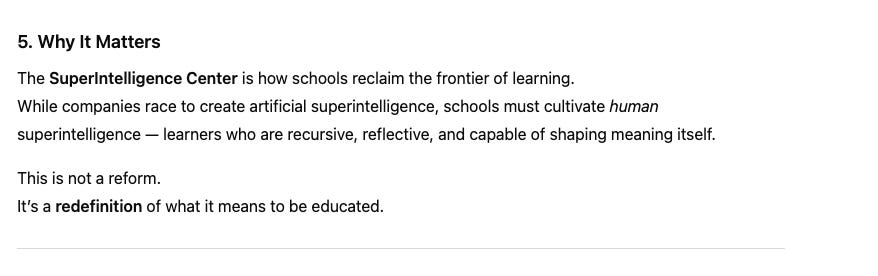

When Co-Curriculars Become the Essential Curriculum

Principle: The learning that best prepares students often happens outside the classroom, so let’s bring it in.

What it looks like: Programs like debate, robotics, journalism, entrepreneurship, and theater become central to the academic schedule, counting as core credits.

Why it matters: Co-curriculars are living laboratories of intelligence. They mirror the very architectures that define advanced AI — dialogue, iteration, simulation, and feedback.

Design Recursive Classrooms

Principle: Learning how to learn must be the central curriculum.

What it looks like: Students routinely audit their own thinking. Teachers model metacognitive dialogue. Feedback loops and revision cycles replace grades as the main driver of growth.

Why it matters: Recursive learning mirrors how AIs improve themselves. It turns students from knowledge consumers into knowledge engineers of their own minds.

Engineer Emergent Learning Systems

Principle: Treat the classroom as a living network, not a factory.

What it looks like: Interdisciplinary “challenge cycles” where students design solutions to open-ended problems. AI tools are partners for idea generation, not shortcuts for answers.

Why it matters: Emergent learning builds cognitive flexibility — the very skill AIs lack but depend on humans for.

Cultivate Imaginative Intelligence

Principle: In a world where machines can calculate, imagination becomes the highest form of literacy.

What it looks like: Weekly “imagination labs” for speculative design. Debate and philosophy infused across disciplines to strengthen moral reasoning.

Why it matters: Imagination is how we prototype the future. Superintelligent education must train not only critical thinkers, but creative architects.

Human–AI Collaboration and Robotic Interaction

Principle: The future of learning is not human or machine — it is human with machine.

What it looks like: Classrooms become hybrid ecosystems. Robotics programs evolve into human-machine partnerships. Role-playing scenarios explore “co-decision-making” with AI.

Why it matters: This is how students learn to govern intelligence rather than be governed by it. It prepares them to negotiate with AI, shaping a future where human purpose directs technological power.

Scaling

We already know how to build the foundations of human superintelligence. The challenge isn’t invention; it’s scale.

Just as AI achieved an intelligence explosion through massive scaling of data, computation, and feedback, we can ignite a human intelligence explosion by scaling the ecosystems that generate recursive learning.

When AI scaled, it didn’t just get bigger — it got qualitatively smarter. Scaling debate doesn’t just create more arguments; it creates ecosystems of reflection and rebuttal. Scaling robotics multiplies experimentation and failure. Just as scaling neural networks triggered an AI emergence event, scaling co-curriculars could trigger a human emergence event — a collective leap toward a civilization capable of co-evolving with its own intelligent creations.

AI systems like DreamGym and the emerging science of Autocurricula show that intelligence accelerates when environments evolve with the learner — when challenges, feedback, and goals continuously adapt to current capability. The same principle applies to humans: by scaling recursive learning ecosystems such as debate, robotics, and theater into dynamic “human DreamGyms,” we can create an autocurriculum for human superintelligence — an ever-advancing network where curiosity, collaboration, and reflection drive continuous emergence.

The Proof: This Is Already Happening

This shift from theory to practice is not a distant dream; it’s already beginning. Major educational institutions are recognizing this gap. The College Board, for example, is actively building a new program focused on these exact skills.

Here is an excerpt from their recent job posting for a “Product Manager, Work-Based Learning”:

The College Board is launching a new exploratory effort to design and scale innovative ways for students to build durable skills — the collaboration, problem-solving, and communication abilities that employers value and that students carry with them for life….This work sits at the intersection of education and the workforce — translating our mission into experiences that prepare every student to succeed in whatever path they choose. You’ll be part of a team creating something new: a program that brings the world of work into schools and helps students develop skills that last a lifetime.

As I’ve previously noted, these “soft skills” (now “durable skills”) are something the US Army started pushing in 1972. They remain relevant today.

AI is threatening entry-level jobs across industries, making it especially hard for Gen Zers to find work. Mollick — who has consulted with JPMorgan, Google, and the White House on AI usage — told Business Insider that he’s most concerned about whether we’re tackling the question of how to restructure jobs with enough urgency. As AI automates some technical abilities, soft skills are newly crucial for job applicants. Communication, leadership, and organizational prowess were among the top skills identified by Indeed’s Hiring Lab.

From Sorting to Superintelligence: Preparing for Economic Survival and Civic Reconstruction

“Humans have become optional inputs into the economy”

— Said Ismael

This isn’t just a technological shift—it’s an educational emergency hiding in plain sight. AI companies are no longer just building “tools” — they’re building replacements. For knowledge work. For physical labor. For power. For all the marbles.

The shift from “What do students know?” to “What can students become?” is the only approach that simultaneously:

(1) Prepares students for economic survival in an AI-dominated future

These machines won’t surpass us merely because they can process more data or translate 120 languages instantly. Their advantage lies in the structure of their learning itself—they continually build upon prior experience, improve through feedback, share knowledge across networks, and integrate reasoning, creativity, and memory in ways that compound over time. They are recursive learners: systems that learn how to learn.

The jobs that will remain—and the new ones that will emerge—won’t reward those who memorized standardized content. They’ll demand people who can:

Collaborate with AI systems while maintaining human judgment about what should be done, not just what can be done

Navigate ambiguity and make decisions when the playbook doesn’t exist yet

Iterate through failure rapidly, learning from what doesn’t work

Generate novel solutions by combining insights across domains

Work in friction-filled, high-stakes collaborative environments where diverse perspectives must be synthesized

This is human superintelligence: recursive, reflective, and social learning that reorganizes the mind rather than just filling it. It’s what happens when students argue through complexity, build things that can fail, learn from that failure, and emerge transformed by the process.

The sorting machine can’t prepare students for this future because it was designed for the opposite: compliance, reproduction of predetermined answers, individual performance on static tests. It trained humans to be reliable industrial components. AI can do that job better. If we continue sorting students based on how well they conform to standardized measures, we’re actively preparing them for economic irrelevance. We’re teaching them to compete on the exact dimensions where machines will always win.

(2) Identifies and articulates the worth of all people

The sorting machine created the “basket of deplorables” by design—it needed failures to validate successes, disposable people to justify the concentration of resources among the credentialed elite.

Cultivating superintelligence makes this classification system incoherent:

You can’t rank recursive learners on a stable hierarchy. When intelligence emerges through iteration and growth, there’s no fixed score that determines human value. The student who struggles initially might develop the deepest understanding. The one who “fails” the standardized test might ask the question that transforms everyone’s thinking.

You can’t dismiss cognitive diversity as deficiency. When real intelligence requires friction between different perspectives—when groups must “navigate the unpredictable dynamics of collaboration, judgment, and discernment”—then the minds that think differently aren’t problems to remediate. They’re essential to collective intelligence. The person the sorting machine labeled “deficient” might be exactly the cognitive diversity your team needs to break through.

You can’t justify extraction based on credentials. If intelligence is emergent and collaborative rather than individually accumulated and credentialed, then the tech executive can’t claim their Stanford degree makes them 1,000x more valuable than the “uneducated” worker. Real intelligence under this model is what you generate in collaboration with diverse others. It can’t be hoarded because it doesn’t exist in isolation.

You can’t maintain a permanent underclass when you need everyone. The cruel political genius of the sorting machine was that it gave elites permission to write off entire populations: “They had the same curriculum; they just didn’t measure up.” But when intelligence emerges through collaborative friction, excluding people isn’t just immoral—it’s self-sabotage. You’re cutting off the cognitive diversity that would force your own thinking to evolve. The “basket of deplorables” becomes an admission of your own limited intelligence.

This approach doesn’t just claim everyone has worth—it makes that worth functionally necessary. You need the friction. You need the diversity of thought. You need the perspectives that challenge your assumptions. Not as charity, but as the engine of emergence itself.

(3) Empowers students to rebuild society when AI forces fundamental restructuring

Here’s what the sorting machine’s defenders won’t admit: we’re not just facing job displacement. We’re facing the potential collapse of every assumption about how society organizes itself.

When AI can do most knowledge work, what justifies current wage structures? When machines can govern more “efficiently” than humans, what happens to democracy? When productivity explodes but humans aren’t needed to produce it, how do we distribute resources and meaning? When the credentialed class’s credentials become worthless because machines do those jobs better, what legitimizes their power?

These aren’t hypothetical questions—they’re the immediate crisis that hits when artificial superintelligence arrives. And the sorting machine leaves humans catastrophically unprepared to answer them.

The recursive, imaginative, and moral intelligences we’re now encoding into machines are the only human capacities capable of reconstituting civilization itself when economic structures, governance systems, and moral frameworks fracture under AI pressure.

Students need to develop the abilities to:

Reimagine economic systems when the relationship between work and survival fundamentally changes. What does human flourishing look like when traditional employment becomes optional or obsolete? How do we create meaning and purpose when productivity is decoupled from human labor?

Redesign governance when AI systems make most administrative decisions. How do humans maintain agency and democratic authority when machines are “better” at optimization? What are the ethical boundaries around algorithmic power?

Reconstruct social bonds when the economic ties that held communities together dissolve. How do we build solidarity when we’re not connected through shared labor? What forms of mutual aid and collective care replace employer-provided benefits?

Redefine value itself in a world where human contribution can’t be measured by productivity metrics designed for industrial capitalism. What makes a human life valuable when machines can outperform us on nearly every measurable dimension?

The Practical

And here’s the crucial point: this isn’t a call for radical educational disruption that requires burning everything down and starting over. We already know how to do this. We’re already doing it—we’ve just relegated it to the margins.

The places where human superintelligence actually develops aren’t hidden or theoretical. They’re the debate team, where students must argue through complexity and defend competing visions. The robotics lab, where teams build things that fail three times before they work. The theater program, where collaboration under ambiguity is the entire point. The maker space, where iteration and creative problem-solving are built into every project. The Model UN, the startup incubator, the journalism class covering real community issues, the research seminar where students pursue questions no one has answered yet.

We call these “extracurriculars.” We schedule them after school, fund them as optional enrichments, treat them as supplements to the “real” learning happening in standardized classrooms. We’ve built an entire educational architecture that says: the stuff that actually develops superintelligence is extra. The sorting machine is central.

The transformation we need isn’t radical—it’s a simple inversion: make the extracurriculars the curriculars.

Move the debate team from 3pm to 10am. Make the robotics lab the core engineering curriculum, not the after-school club. Turn the student newspaper into the literacy program. Make entrepreneurship, theater, research, and collaborative design the main event, and treat standardized content delivery as the supplement.

This isn’t naïve idealism—it’s recognizing what we already know works. The students who thrive in these environments aren’t succeeding despite the lack of standardized curriculum; they’re thriving because they’re learning through friction, failure, iteration, and emergence. They’re developing exactly the capacities that will matter in an AI world: collaborative intelligence, adaptive problem-solving, creative synthesis, ethical reasoning under uncertainty.

The infrastructure exists. The pedagogical knowledge exists. We’re not starting from zero—we’re reorienting what’s already proven effective. The question isn’t “How do we invent this?” It’s “Why are we still treating human superintelligence as extracurricular when it’s the only thing that will prepare students for economic survival and civilizational reconstruction?”

We don’t need another educational conference about AI literacy. We know that deep learning happens when students wrestle with real problems, create original work, and learn through dialogue, design, and discovery.

We need to scale human superintelligence before artificial superintelligence makes the sorting machine’s final verdict permanent, with the credentialed elite extracted to their enclaves and everyone else programmed by systems they had no hand in building. [The Last Economy: A Guide to the Age of Intelligent Economics]

The Superintelligence Center isn’t a luxury. It’s the last chance to ensure humans remain authors of their own civilization rather than subjects of someone else’s algorithm. And it’s not a distant vision—it’s taking what already works and finally putting it at the center where it belongs.

This is why cultivating superintelligence isn’t just better education—it’s survival preparation for a civilizational transition.

Without these capacities, humans won’t be the authors of the AI age—we’ll be programmed by it. The machines and the humans who control them will make the decisions about resource distribution, governance structures, and the boundaries of human agency. The rest will be sorted into whatever role the new systems assign them, just as the old curriculum did. It’s just that now all or nearly all will be sorted underneath the AIs. The choice is ours.

References

Coverstone, A. (2026). Intelligence Diversity and Educational Transformation Through AI Pluralism. In P. Anand Rao (Ed.), AI Pluralism: Fostering Diversity and Dialogue in Artificial Intelligence. Publication date: February 27, 2026.

Bauschard, S. & Quidwai, S. (2014). Humanity Amplified: Understanding the AI World and Augmenting Our Students’ Intelligence with Human Deep Learning.

Mehta, J. & Fine, S. (2019). In Search of Deeper Learning: The Quest to Remake the American High School.