AI Is the Cognitive Layer. Schools Still Think It’s a Study Tool.

A New World

We are living through the birth of a new world—and most of education doesn’t get it.

Right now, the debate about AI in education is stuck in a narrow loop, circling three questions:

Should society use AI at all?

Should students be allowed to use AI to complete schoolwork?

Should we augment existing teaching and learning with AI tools?

These may sound like the right questions. But they’re not. At least, not anymore.

The first question is already obsolete. We no longer get to decide whether society will use AI. It’s already here: ubiquitous, ambient, and integrated into nearly every part of daily life. AI is embedded in Google search results, smartphones, glasses, Microsoft Office, Google Workspace, appliances, and cars. Debating whether we should use AI is like asking whether we should use electricity or the internet.

It’s not a choice. It’s infrastructure, and short of living outside modern society, we are going to “use” it.

The second and third questions are more subtle—but just as misguided. They assume that the educational model we’ve built is still entirely relevant, and that the right move is simply to enhance it. But what if the model itself is collapsing? What if augmenting outdated goals and irrelevant skills with cutting-edge AI simply accelerates our drift into irrelevance? Few want to ask the hard questions.

The real question isn’t whether to allow students to use AI, or how to plug it into instruction.

The real question is: How do we prepare students for a world where machines can think?

…And where they are better at thinking than we are…when they have more knowledge, when they are more likely to be right, when they can think and act faster…when the economic value of knowledge collapses to close to zero…

What type of education is relevant in this world?

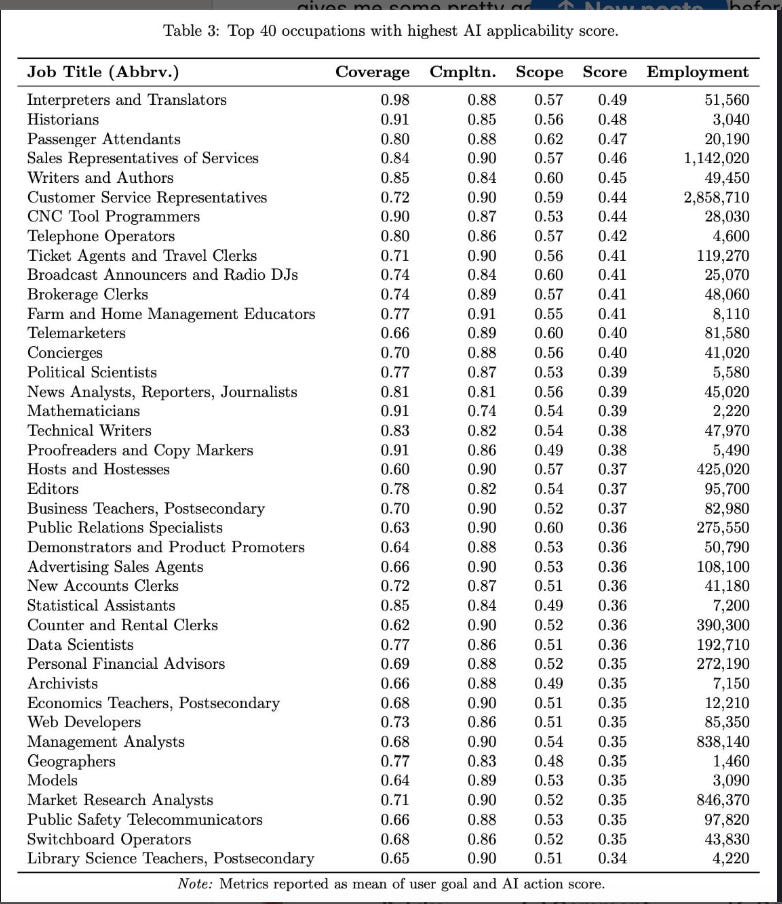

Here’s the larger list of jobs exposed to AI.

A New World is Arriving Quickly

The ground is shifting faster than most institutions can track. AI systems now possess access to more knowledge than any single human in history—instantly searchable, constantly updated, and cross-disciplinary in ways no mind can replicate. From scientific databases to legal code, literature to logistics, these models don’t just retrieve information; they synthesize, explain, and increasingly reason.

Many researchers now believe we are on a steep path toward Artificial General Intelligence (AGI).

OpenAI has already claimed progress toward reasoning across domains. Demis Hassabis, Ray Kurzweil, Ben Goertzel, and even Francois Chollet, who has always articulated conservative AI timelines, believe we will have AGI or something very similar (definitions vary) by approximately 2030. Some believe it will be sooner.

Even critics of exclusively LLM-based approaches to AI development, such as Gary Marcus and Yann LeCun, believe we will eventually develop AGI, possibly on a similar time frame: 5 to 10 years (maybe 3) for LeCun and 5 to 20 years for Marcus. These frequent critics of the significance of LLMs simply believe that LLMs will be only part of the AGI infrastructure and that other approaches and models need to be integrated. Most researchers believe this as well. For example, Google DeepMind, which generally projects a five-year time frame, is also working on the world models LeCun argues for. Ben Goertzel, who has a 2028ish timeline, believes that LLMs will comprise only around 30% of an AGI system.

Meanwhile, robotics is no longer crawling. General-purpose humanoid robots are walking, grasping, manipulating, learning from feedback, and being deployed into warehouses, hospitals, homes, and the military.

Students graduating today are stepping into a world that may be governed by hyperintelligence: systems that exceed humans not just in memory and speed but, in many domains, even in judgment. This is not a future challenge. It’s a current reality. We are already seeing layoffs, role shifts, and productivity gains in sectors touched by generative AI, from customer service to content production to law and software.

By the time today’s 9th graders and college freshmen enter the workforce, the most disruptive waves of AGI and robotics may already be embedded in parts of society.

Energy limitations and ordinary diffusion bottlenecks will slow down distribution, but AI is already the fastest-adopted technology in history.

Education cannot afford to wait and see. We must prepare students not just to survive in this world, but to contribute something distinctly human to a human world.

You Don’t Just Add Electricity to a One-Room Schoolhouse

To understand how profoundly education is misreading the moment, it helps to revisit a previous general-purpose technology: electricity.

Electricity didn’t just improve the candle—it eliminated the need for it. It transformed every facet of life: labor, production, health, communication, transportation, entertainment, science, and more. It created entirely new sectors and social structures. It powered factories, night shifts, refrigeration, telecommunications, broadcast media, and computing.

And education changed with it.

We didn’t just hang a lightbulb in the one-room schoolhouse—we replaced the schoolhouse. Industrialization and electrification brought about the age-graded, subject-divided, bell-scheduled, standardized-mass-education model we now take for granted. We built that system not for philosophical reasons, but because the new economy demanded mass intellectual labor and civic participation.

Neither of those may be needed in the not-too-distant future.

Now we are in a moment just as consequential—and we are pretending it only requires new software licenses.

AI is not a tool.

It is the electricity of this era.

What replaces the old system will not simply be a more digital version of the same thing. Structurally, schools may move away from rigid age-groupings, fixed schedules, and subject silos. Instead, learning could become more fluid, personalized, and interdisciplinary—organized around problems, projects, and human development rather than discrete facts or standardized assessments.

AI tutors and mentors will allow for pacing that adapts to each student, freeing teachers to focus more on guidance, relationships, and high-level facilitation. Classrooms may feel less like miniature factories and more like collaborative studios, labs, or even homes—spaces for exploring meaning and building capacity, not just delivering content.

We already see the emergence of alternative K-12 systems designed along these lines in the form of the rapidly expanding Alpha School (US), where more and more technology leaders are educating their kids, and Colegio Ikagi (Mexico).

In terms of content, the curriculum will shift from memorizing information to making sense of it. The AI systems that students interact with all day will already know what those students know, so there will be no need to test them.

Students may spend less time learning how to do tasks AI can perform—coding, writing research papers, even conducting formulaic experiments—and more time exploring emerging domains like synthetic biology, systems thinking, ethical reasoning, and multi-agent problem-solving. The question will not be “what do you know?” but “what can you do with intelligent tools—and what should you do?”

AI Is Now Ambient. You Can’t Ban It Anymore.

A few years ago, AI was something you had to go to. You had to log into ChatGPT or open an app and deliberately choose to use it. Schools could, in theory, block it. But that era is already over.

AI is now ambient. It’s integrated into your browser, your search engine, your smartphone keyboard, your email inbox, your car, your digital assistant, your creative tools. It is everywhere—not as a single tool, but as a layer under everything. And it's becoming more invisible, more embedded, and more personal every day.

Soon, students won’t even realize they’re “using” AI. It will be part of their search queries, their writing process, their glasses, their voice memos, their earbuds—and, increasingly, their thoughts.

And we are entering the era of brain-computer interfaces (BCIs).

Neuralink, Precision Neuroscience, and others are developing early-stage BCIs that allow humans to interface directly with machines via brain activity. While still in the experimental phase, the trajectory is clear: we are moving from a world where humans use keyboards to prompt AI to one where AI can respond to our thoughts, feelings, and neural patterns in real time.

What does it mean when AI is no longer something students use, but something they think through?

How do we talk about "academic integrity" when a student's ideas are co-generated with a machine inside or slightly outside their mind?

What does it mean to write a paper when your mind and a machine are co-authoring it in silence? How do we talk about "originality" or "learning outcomes" in that world?

What does it mean to “pay attention” when your brain is multitasking with a digital assistant?

How do we define cheating when it’s impossible to tell where a student’s thinking ends and the machine’s suggestions begin?

AI ambience isn’t just about visibility. It’s about proximity to cognition. And brain-computer interfaces take that to the extreme, but even without the extreme, the ambience creates proximity.

We’re not just integrating AI into the classroom. We’re integrating AI into the mind.

The closer AI gets to cognition, the more absurd it becomes to draw lines between "cheating" and "collaboration." Schools must stop treating it as an external variable.

Education must prepare students for a world where AI is not just a tool—it’s a cognitive layer of human life.

Trying to ban AI in this context is like trying to ban thinking.

We need a radically new framework—not for controlling access to AI, but for preparing students to live with it, work with it, and critically reflect on what it means to share mental space with a machine.

The “stoplight model” of AI regulation—popular in schools but deeply flawed—imagines AI as a car at an intersection, something external that can be switched on (green), limited (yellow), or banned (red).

But this framing collapses once we recognize how AI is actually unfolding. It isn’t like flipping a classroom light switch that teachers can control. Increasingly, it’s like the lights themselves anticipating need: turning on when a student enters the room, dimming to match focus, or illuminating precisely what’s required. The lights are even starting to turn themselves on to prompt the student. In other words, AI is no longer a discrete tool but an ambient, cognitive environment.

The real regulatory question, then, isn’t whether students should “use” AI at all—it’s how education must adapt when AI becomes inseparable from the very act of thinking.

From Passive Tools to Agentic Minds

For most of its history, AI has been seen as a tool—a passive assistant that responds to human commands. But that era is ending.

We are now seeing the rise of agentic AI: systems that don’t just generate answers, but pursue goals, plan sequences of actions, and adapt their strategies based on feedback. Think of GPT-based agents that can autonomously execute a task across the internet—booking flights, ordering supplies, managing inboxes, even writing and debugging code without continuous human oversight.

These aren’t just algorithms—they’re emergent actors.

And yet, our educational assumptions are still built around the idea that students will be the initiators of action, the drivers of productivity, the ones who set the goals and do the work. But what happens when that role belongs increasingly to machines?

What does it mean to teach "executive functioning" in a world where machines execute?

What does it mean to prepare students for leadership when autonomous agents will make thousands of micro-decisions a day in logistics, finance, law, and medicine?

We're not talking about losing a few jobs. We're talking about replacing agency itself—and education has not yet grasped what that means.

If students are no longer the default source of action, then we need to teach them to:

Design agents,

Collaborate with agents,

Align agentic systems with human values,

And most of all, retain moral and civic agency in a world where machines act on our behalf.

We are no longer educating students to be just doers.

We must now educate them to be judges, designers, and stewards of agency.

The Illusion of Relevance: Why Curriculum Needs a Total Overhaul

Education is not just clinging to outdated structures—it’s clinging to outdated content, and pretending it’s still relevant.

We’re still teaching coding, graphic design, spreadsheet skills, and video editing like they’re golden tickets to upward mobility. But AI already:

Writes better code than most students

Designs logos and builds websites in seconds

Composes music, animates stories, edits videos, and builds slide decks from prompts

We’re preparing students for jobs that AI is already eating alive.

But instead of confronting that truth, we keep doubling down—layering AI on top of collapsing skills and calling it innovation.

This doesn’t have to be a loss. It can be a liberation—if we rethink relevance.

Math

As explained by Dr. Maggie Renken, for generations, American math education has marched students down the same narrow path: Algebra → Geometry → Algebra II → Precalculus → Calculus. This “calculus march” was defended as mental training, cultural literacy, and a gatekeeper for STEM careers. But AI has changed the landscape. The symbolic manipulation that once defined advanced math—the algebraic tricks, the multi-page proofs, the hand calculations—can now be done in seconds by machines. By 2035, AI won’t just be solving our equations; it will be inventing entirely new mathematics to tackle problems we can barely imagine today—climate modeling, food security optimization, poverty reduction algorithms.

In this new reality, the rare and valuable human skill will no longer be calculating the answer but knowing what questions to ask, how to frame them, and when to distrust the outputs. The future of math education must focus on applied reasoning, systems thinking, and ethical judgment in an AI-saturated world. Students need to learn the math of power—the math embedded in algorithms shaping credit scores, healthcare, climate risk, and economic opportunity.

That means reimagining the curriculum. Instead of treating calculus as the universal pinnacle, we should prioritize pathways like data literacy, probability and statistics, graph theory, networks, discrete math, and systems dynamics. Instead of assessing fluency with “plug-and-chug” homework, we should evaluate the quality of reasoning, the clarity of assumptions, and the ethical implications of models. Instead of one rigid progression, we should open multiple on-ramps and capstones—climate modeling, social network analysis, sustainability optimization—so every student graduates able to read, critique, and shape AI-driven decisions.

Coding and Computer Science

Nowhere is this transformation more immediate than in the way we teach coding and computer science. For years, we treated programming as the new literacy, a foundational skill for participating in the digital economy. But what happens when AI can code better, faster, and in more languages than any human? When it can debug, refactor, generate entire applications from natural language prompts, and even explain its own output in real time? Teaching students to write Python line-by-line may soon resemble teaching them to operate a printing press: valuable for historical context, but no longer central to economic or creative relevance.

This doesn’t mean we should abandon computer science. But it does mean rethinking why we teach it. Rather than focusing on syntax and execution, curricula will need to emphasize systems thinking, architecture, human-AI collaboration, and the ethical design of intelligent systems. Students will need to understand how large models work, how to assess and constrain their behavior, how to communicate with them, and how to evaluate their outputs. The future of CS education is not about turning every student into a software developer—it’s about preparing them to shape, interrogate, and govern the intelligent infrastructure of the 21st century.

Coding as a skill doesn’t disappear—it evolves. It becomes less about mastering languages and more about learning how to ask better questions, design higher-order workflows, and debug systems of meaning. The human role shifts from writing code to directing, critiquing, and contextualizing machine-generated solutions. If we cling too tightly to the old vision of programming as manual labor, we risk preparing students for jobs that no longer exist. But if we open our eyes, we can teach them to become not just builders of technology—but stewards of its impact.

English

English class has long been the cornerstone of humanistic education, where students learn to express themselves, interpret complex texts, develop arguments, and explore meaning through narrative and analysis. But AI has entered the conversation. Language models can now write essays, analyze Shakespeare, draft college admissions letters, summarize articles, and simulate persuasive rhetoric in digital contexts at a level that rivals, and often surpasses, what people produce.

The traditional justification for assigning five-paragraph essays and literary analysis papers is crumbling: if a machine can do the task in seconds, are we still teaching thinking—or just grading outputs?

But the solution is not to abandon English—it is to radically deepen it. In an AI-saturated world, the value of English lies not in mimicking formal structures or performing rote tasks, but in cultivating authentic voice, human nuance, and interpretive judgment, even if that is now intrinsically tied to AI-collaboration.

We must move beyond teaching students to write like machines and instead help them explore how humans use language to connect, persuade, comfort, and imagine. This means embracing oral storytelling, dialogue, multimedia expression, and deep reading not just for comprehension, but for moral discernment. It means helping students develop the ability to question all sources (AI and others), recognize bias, and understand how language shapes our emotional and ethical lives.

Writing becomes not just a performance of grammar and form, but an act of human anchoring in a world awash in synthetic speech. Reading becomes less about extracting answers and more about building the capacity for empathy, complexity, and sustained attention.

English class, in this future, is not obsolete—it is essential. But only if we are bold enough to reimagine it not as training for standardized tests, but as a workshop for human meaning-making in the age of machines.

Toward Wonder, Design, and Radical Possibility

What if we stopped pretending our job is to make students marginally more employable—and started treating them as co-creators of the future?

Let them:

Design an interactive TV show where viewers can change the ending

Build a synthetic species and defend its ecological impact

Simulate an alternate history with generative media

Create an AI-generated musical that adapts to audience mood

Lead a synthetic social movement and test its ethical boundaries

These are not science fiction. These are the kinds of creative, ethical, interdisciplinary challenges that AI can’t fully do alone—and likely never will.

Don’t teach students to do what AI can already do.

Teach them to imagine what AI can’t even conceive of yet.

The Ethics Debate Misses the Bigger Picture

Yes, AI raises serious ethical issues. But the current educational discourse often fixates on binary questions—should students be allowed to use AI? Is AI good or bad?

But we don’t have entire classes on the ethics of electricity or fire. We don’t frame the internet as a binary moral dilemma. Instead, we teach students how to navigate these technologies wisely, and in context.

We must move past the “should we use it” phase. AI is not something we can isolate or quarantine; it’s part of the foundation now. And while ethical reflection is crucial, it cannot replace the much deeper and more difficult task: rethinking what students need to learn in a world transformed by thinking machines, and which uses of AI are good and which are bad.

Students Question Value

These questions are no longer theoretical—they are starting to weigh heavily on the minds of students themselves. Many are asking, sometimes quietly and sometimes aloud: What’s the point? Why master a skill an AI can do better? Why sit through classes designed for a world that’s vanishing?

While institutional and parental awareness has lagged behind, a shift is underway. Students are increasingly aware that they are being prepared for jobs and systems that may not exist by the time they graduate.

In a widely circulated story, a student recently dropped out of MIT—not for lack of ability, but because they could no longer see the relevance of education in a world racing toward AGI.

For many students, especially those deeply immersed in the technologies transforming our world, the emotional and psychological toll of this dissonance is growing. They’re not just anxious about grades—they’re anxious about meaning. They are searching for a new story of purpose, one that education has not yet given them.

And their schools are giving AI the silent treatment.

Higher Education

We don’t just have a K–12 problem. We have a higher education mirage—a system of prestige, competition, and credentialing that no longer aligns with the reality unfolding around us.

For decades, the logic has been simple: K–12 prepares students for college, and college prepares them for careers. But what happens when the careers those students are preparing for no longer exist? Or when those careers are flooded by intelligent systems that outperform humans at core job functions?

If college is no longer a reliable pathway to economic stability or intellectual distinction, then what is K–12 actually preparing students for?

And beyond misalignment, we must confront the uncomfortable truth that the value of higher education has been artificially propped up by scarcity. Students are told to compete for access to elite universities, which serve as gatekeepers to opportunity—not because they offer experiences that can't be replicated, but because access is limited. The whole game is built on exclusion.

But AI disrupts that scarcity.

If an elite professor’s lectures can be cloned and improved, if their office hours can be simulated, if a world-class curriculum can be delivered on-demand to anyone, anywhere—for a fraction of the cost—then what happens to that competitive motivation?

Shouldn't we be offering the best learning experiences to everyone, not just the few who win the admissions lottery?

And if AI can deliver the best K–12 instruction for pennies, why are we still rationing educational excellence?

In a world of abundance, continuing to build education on the illusion of exclusivity is not just inequitable—it’s intellectually dishonest.

The real question is no longer “How do we get more students into elite institutions?”

It’s “How do we redefine learning in a world where the best can be replicated—and shared?”

The End of the Knowledge Worker Economy?

For decades, education was designed to feed the knowledge economy. Standardized tests, college admissions, academic publishing, and professional credentials were all part of a system meant to prepare students for intellectual work.

But when machines can do that work, what remains?

Maybe it’s care. Maybe it’s relationship. Maybe it’s context, ethics, and presence. If machines can out-write, out-publish, and out-analyze humans, then maybe what’s left is what makes us human.

We may soon find that the most economically valuable roles are the most emotionally intelligent ones:

Caring for the elderly

Supporting children

Mediating conflict

Designing communal rituals

Creating meaning in a world of abundance

Are we preparing students for that?

The Human Core: What Still Belongs to Us

Threaded throughout this essay are glimpses of a new center of gravity for education—one that doesn’t deny AI’s power, but instead reclaims what it means to be human alongside it.

We’ve explored how AI is developing agency, fluid intelligence, and ambient presence. We've seen how it’s replicating knowledge, creative output, and even forms of reasoning. But in that rapid expansion, we also see something narrowing—something essential that education must now hold onto and elevate with greater urgency.

These are the traits that machines still do not—and perhaps may never—possess in full:

The ability to show judgment amid moral complexity

The inclusion of lived experience and human context in reasoning

The capacity to pose new questions, not just solve existing ones

The navigation of emotion, culture, and ethical dilemmas in real time

And even if AI eventually can simulate these abilities—can act like it understands morality, culture, and emotion—the distinctly human dimension remains irreplaceable.

Because judgment is not just a computational output—it’s something we extend to each other in relationship and community.

Because context isn’t just information—it’s what it means to live a life, to have history, memory, and perspective.

Because posing a new question is not just clever—it’s an act of meaning-making, curiosity, and sometimes courage.

Because navigating human emotion and ethics is not something done in abstraction—but with and for other people.

These aren’t just skills. They are the fabric of human interaction, and they depend not only on cognition but on presence, trust, and shared vulnerability.

Even if machines can demonstrate these traits in isolation, their value emerges most fully in human-to-human contexts:

A student feeling seen by a teacher’s moral discernment

A classroom grappling with ethical ambiguity together

A community finding common ground through shared emotional understanding

A young person learning not just how to reason—but how to matter to others

This is what still belongs to us.

And this is what education must now double down on—not as a nostalgic retreat into the past, but as an affirmation of what we still need most in a future shaped by machines.

In a world where intelligence is abundant,

relational humanity may be the rarest resource of all.

The Beginning of the Human Worker

1. Show judgment amid moral complexity

Similar questions might include:

How do we make good decisions when the right answer isn’t clear?

What values should guide us when outcomes are uncertain or competing?

How do we weigh competing ethical claims in high-stakes situations?

What do we do when different people or cultures see a situation differently?

What’s the right thing to do when the consequences might hurt someone?

These questions speak to ethical reasoning, moral courage, and philosophical judgment, often developed in humanities and civics education.

2. Include lived experience and context in reasoning

Similar questions might include:

How might someone’s background change how they understand this issue?

What blind spots might I have because of my own perspective?

How does history shape the meaning of this situation?

What’s missing from this analysis that a real person might see?

These highlight empathy, cultural literacy, social context awareness, and epistemic humility—and are central to justice-oriented education.

3. Pose new problems instead of just solving them

Similar questions might include:

What question should we be asking?

Is the current way of framing this problem the best one?

What issues are we overlooking because we’re focused on the “solution”?

How can I reframe this situation to uncover new opportunities?

This focuses on creative thinking, paradigm-shifting, and metacognitive insight—traits often nurtured in entrepreneurial, design-based, or philosophical settings.

4. Navigate human emotions, cultures, and ethical dilemmas

Similar questions might include:

How might people feel in this situation, and why?

What cultural assumptions are shaping how this is playing out?

How do we move forward when people disagree strongly?

What role does compassion play in leadership or decision-making?

These emphasize emotional intelligence, intercultural understanding, and relational wisdom—areas where AI may simulate behavior but not possess genuine depth or moral weight.

What Can We Do?

This is not a time for tweaks. It’s a time for transformation.

What School Leaders Might Do

Recenter your purpose. If the future of work is uncertain, let the mission of school be preparing people to live meaningfully with others in a world shaped by AI.

Audit your curriculum. Ask not just “what are we teaching?” but “who are we helping students become?”

Create space for emotional and ethical development. These aren’t side programs. They are core to the human strengths that remain essential—even if AI gets everything else.

Protect the human center. Don’t just integrate AI—invest in community, in dialogue, in presence, and in trust.

What Curriculum Designers Might Do

Design for human judgment, not just performance. Ask students to wrestle with real moral and emotional complexity—not just produce clever outputs.

Build context-rich, relational tasks. Encourage storytelling, conflict resolution, lived-experience reflection, and collaborative meaning-making.

Include questions AI can’t answer well. Not because they’re hard, but because they’re human: What should we care about? What is fair? What does this feel like?

Reclaim the classroom as a place of shared inquiry. Design experiences where learning is rooted in conversation, contradiction, and compassion—not just content.

What Individual Teachers Might Do

Talk about the human side of learning. Ask students not just what they know, but what matters to them—and why.

Model uncertainty, empathy, and care. You are not just a knowledge resource. You are a relational presence. That may become the most valuable thing you offer.

Create learning spaces that are emotionally alive. Let students feel safe to share, to fail, to struggle, and to connect. Machines don’t do that. People do.

Celebrate the distinctly human. When a student demonstrates discernment, shows compassion, or names a moral dilemma—pause and praise it. These are the moments that machines can’t replicate and that society will increasingly need.

Final Thought

The world our students are entering is not an upgraded version of the past.

It is something entirely new.

We are no longer the sole producers of knowledge.

But we are still the ones who bring judgment, context, and meaning to it.

We are still the ones who ask why it matters, for whom, and at what cost.

We are still the ones who care—and teach others to care, too.

Even if machines can simulate insight, only humans can extend human-to-human empathy.

Even if machines can solve problems, only humans can build human-to-human relationships.

Even if machines can replicate minds, they cannot replace human hearts.

So no—education is not obsolete.

But it is off course.

And this is our moment to turn toward what still belongs to us:

The human.

The relational.

The moral.

The imaginative.

The shared.

This is not a time to add AI to education.

It’s a time to reclaim education for the age of AI.

Because in a future filled with machine minds,

human connection may be the last truly transformative technology we have.

My question is: How do we prepare ourselves so we can prepare students for a world where machines can think?